The relationship between artificial intelligence (AI) and optical modules is one of mutual acceleration and fundamental dependence. As AI models grow in size and complexity, they demand unprecedented levels of computing power, which in turn requires massive amounts of data to be moved quickly and efficiently between thousands of interconnected GPUs. Optical modules—the devices that convert electrical signals into optical signals and vice versa—have become the critical enablers of AI infrastructure, determining not only the performance of AI clusters but also their scalability and cost-effectiveness.

1. AI Drives Explosive Demand for Optical Modules

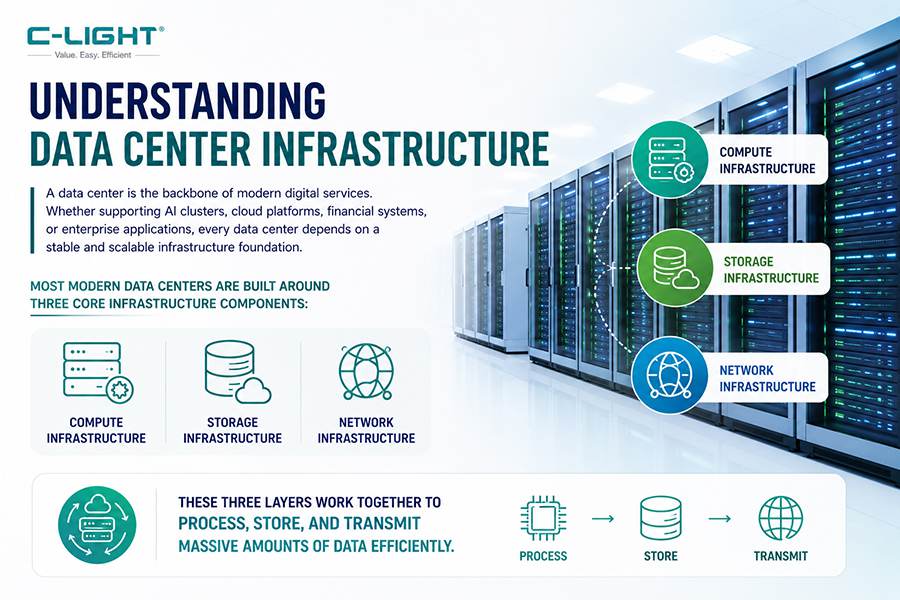

AI's insatiable appetite for computing power translates directly into demand for high-speed optical connectivity. In an AI data center, thousands of GPUs must communicate with each other constantly during both model training and inference, and beyond the rack this traffic relies almost entirely on optical links. According to TrendForce research, high-speed interconnect technology has become the key factor determining the performance ceiling and scalability of AI data centers.

The growth trajectory is striking. In 2025, global shipments of optical transceivers at 800G and above reached 24 million units. In 2026, this figure is expected to reach 63 million units, roughly 2.6 times the 2025 volume. According to LightCounting, the combined market for 800G and 1.6T optical modules is projected to reach $14.6 billion in 2026, approximately 64% of the total optical module market.
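The figures above can be cross-checked with a few lines of arithmetic. This Python sketch uses only the numbers quoted in the paragraph. The implied total market size is a derived estimate, not a figure reported by LightCounting.

```python
# Shipment and market figures quoted in the text (units and US dollars).
shipments_2025 = 24_000_000      # 800G+ transceivers shipped in 2025
shipments_2026 = 63_000_000      # 800G+ transceivers expected in 2026
market_800g_1p6t_2026 = 14.6e9   # LightCounting projection for 800G + 1.6T
share_of_total = 0.64            # that segment's share of the whole market

# Year-over-year multiple and percentage increase in unit shipments.
growth_multiple = shipments_2026 / shipments_2025
growth_percent = (growth_multiple - 1) * 100

# Total optical module market implied by the segment size and its share.
implied_total_market = market_800g_1p6t_2026 / share_of_total

print(f"2026 / 2025 shipments: {growth_multiple:.3f}x (+{growth_percent:.1f}%)")
print(f"Implied 2026 total market: ${implied_total_market / 1e9:.1f} billion")
```

The shipment multiple works out to 2.625x, an increase of about 160%. The segment share implies a total 2026 market of roughly $22.8 billion.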

Several factors drive this explosive demand. The inference phase of AI workloads demands three to five times more optical modules than the training phase, making inference the core engine of industry growth. Hyperscale cloud providers are leading the charge, with Meta, Google, Microsoft, and Amazon collectively increasing their capital expenditures by an estimated 50% year-over-year to $333.8 billion in 2025. Meta alone has revised its 800G module requirements from 6 million to over 10 million units, while Amazon's demand has reached 5.5 million units, driven by its self-developed ASIC deployments.

2. Optical Modules Are Essential to AI Cluster Architecture

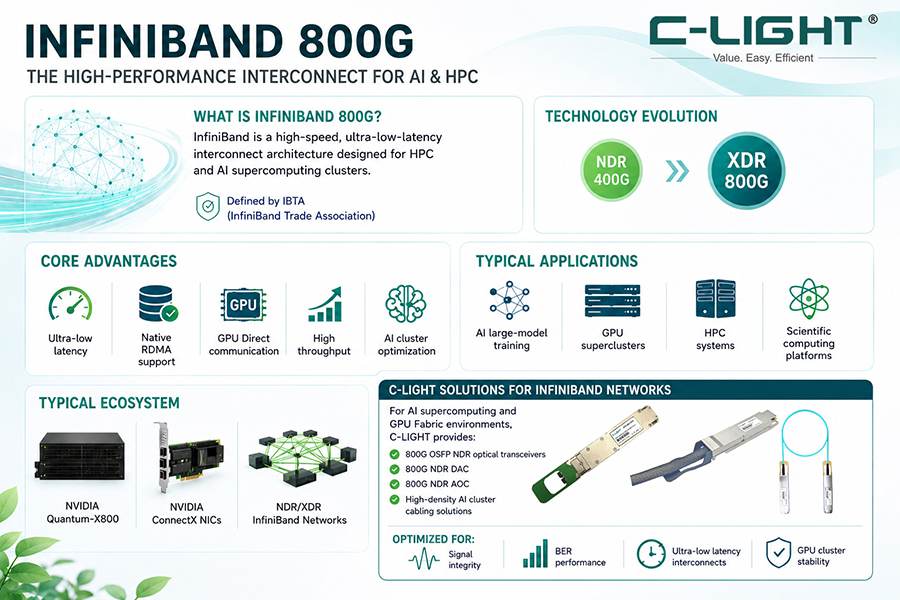

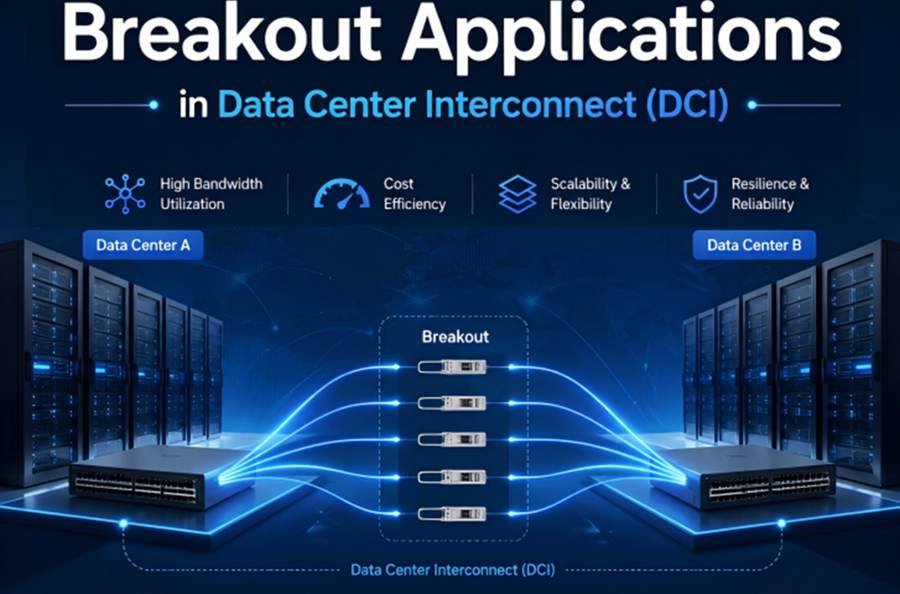

Understanding the relationship requires appreciating the scale of connectivity inside an AI cluster. In a modern AI data center, GPUs are organized into racks, pods, and superpods. Within a rack, GPUs communicate via NVLink and NVSwitch at terabit-per-second speeds. To connect multiple racks into pods and superpods, data centers use InfiniBand and Ethernet fabrics running at 800Gb/s per port, and optical modules provide these links.

NVIDIA's Quantum-X800 InfiniBand platform, for example, supports 144 ports per switch at 800Gb/s each. This enables large GPU clusters to be built with fewer switching layers, which reduces latency and complexity. The platform includes NVIDIA's photonic switches, which integrate silicon photonics directly into the switch package. They are claimed to deliver 3.5 times better energy efficiency and 10 times better reliability than traditional pluggable optical modules.
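Why does a higher port count mean fewer switching layers? A common textbook model is the non-blocking fat tree (folded Clos). In it, every switch below the top tier splits its ports evenly between downlinks and uplinks. The sketch below assumes that model and one network port per GPU. Real deployments, such as rail-optimized designs, differ in detail, and the 64-port case is included only for comparison.

```python
def max_endpoints(radix: int, tiers: int) -> int:
    """Endpoints supported by a non-blocking fat tree with `tiers` switch layers.

    Switches below the top tier use half their ports as downlinks, so each
    extra tier multiplies capacity by radix / 2: N = 2 * (radix / 2) ** tiers.
    """
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even number of ports")
    if tiers < 1:
        raise ValueError("need at least one switch tier")
    return 2 * (radix // 2) ** tiers


# Compare a 64-port switch with a 144-port switch at one to three tiers.
for radix in (64, 144):
    for tiers in (1, 2, 3):
        print(f"{radix:3d}-port switches, {tiers} tier(s): "
              f"{max_endpoints(radix, tiers):,} GPUs")
```

Under this model, 144-port switches reach 10,368 GPUs in two tiers. A 64-port design tops out at 2,048 GPUs at two tiers and needs a third tier beyond that.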

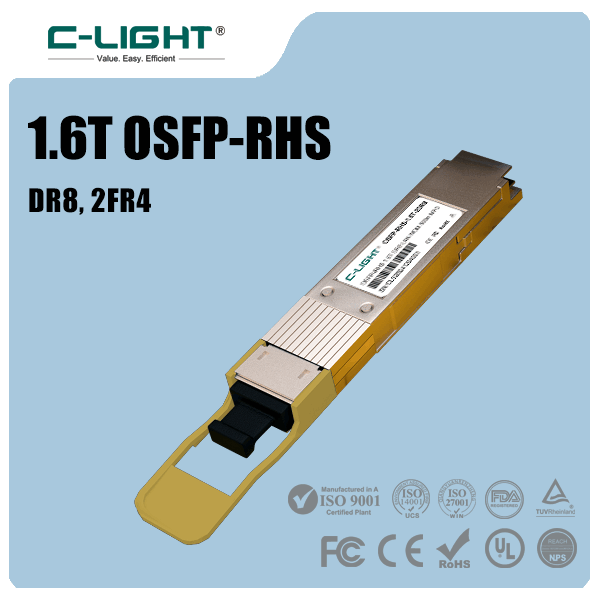

Demand for optical modules scales with cluster size. A single GB200 server requires 162 optical modules at 1.6T to ensure efficient data transmission across its network fabric. As AI clusters grow from thousands to tens of thousands of GPUs, the number of optical modules grows faster than the GPU count. Larger clusters need additional switching tiers, and every tier adds another full layer of optical links.
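The faster-than-linear growth can be illustrated with a rough estimate built on a non-blocking fat-tree model. The sketch makes these assumptions:

- each GPU has one scale-out port;
- every tier uses optical links;
- each link has one transceiver at each end.

It ignores copper NVLink inside the rack and oversubscribed designs, so it is an illustration, not a bill of materials. The helper names are hypothetical.

```python
def max_endpoints(radix: int, tiers: int) -> int:
    """GPUs supported by a non-blocking fat tree: 2 * (radix / 2) ** tiers."""
    return 2 * (radix // 2) ** tiers


def tiers_needed(num_gpus: int, radix: int) -> int:
    """Smallest number of switch tiers whose fat tree can hold num_gpus."""
    if radix < 4 or radix % 2:
        raise ValueError("radix must be an even number of at least 4 ports")
    tiers = 1
    while max_endpoints(radix, tiers) < num_gpus:
        tiers += 1
    return tiers


def estimate_optical_modules(num_gpus: int, radix: int) -> int:
    """Rough transceiver count for a non-blocking fabric.

    The fabric carries num_gpus links across each of its `tiers` layer
    boundaries (GPU-to-leaf plus every switch-to-switch level), and every
    link is assumed to need one optical module at each end.
    """
    tiers = tiers_needed(num_gpus, radix)
    links = num_gpus * tiers
    return 2 * links


for gpus in (8_192, 16_384, 65_536):
    modules = estimate_optical_modules(gpus, radix=144)
    print(f"{gpus:6,d} GPUs -> {tiers_needed(gpus, 144)} tiers, "
          f"{modules:7,d} modules ({modules / gpus:.0f} per GPU)")
```

Doubling the cluster from 8,192 to 16,384 GPUs crosses the two-tier limit of 10,368 GPUs for 144-port switches. As a result, the estimated module count triples (from 32,768 to 98,304) rather than doubling.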

3. AI Accelerates Optical Module Technology Evolution

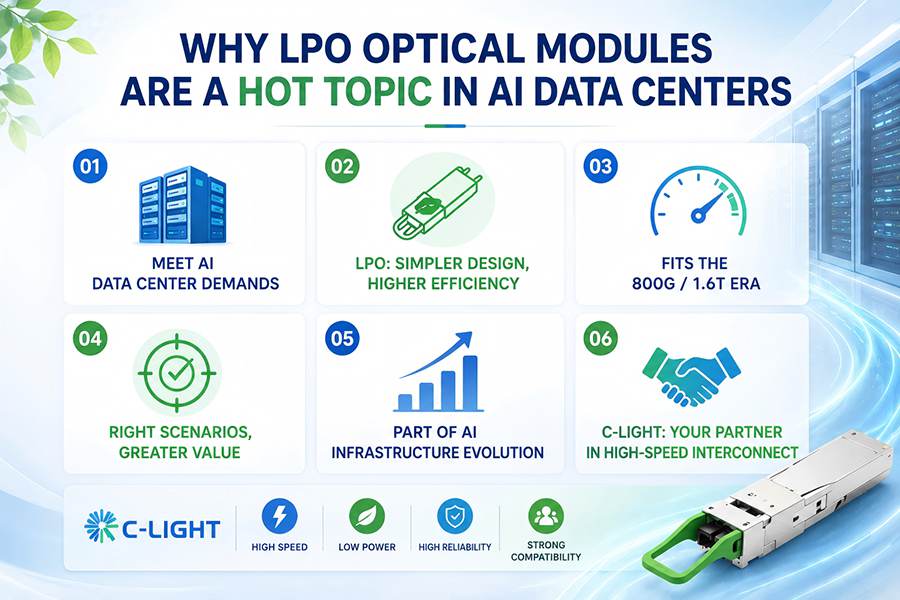

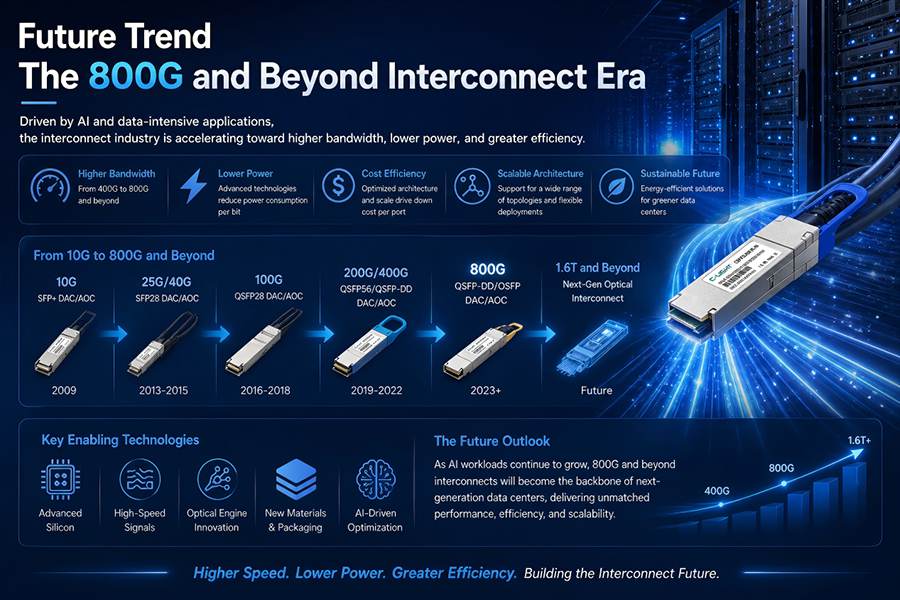

Before the AI era, optical module speeds doubled approximately every four years. AI has compressed that cycle dramatically: starting in 2023, each upgrade step from 400G to 800G to 1.6T has taken roughly two years. The industry is now in full transition from 400G to 800G as the mainstream technology, with 1.6T entering commercial deployment in 2026 and 3.2T expected to begin ramping from 2028 onward.

Several key technologies are being accelerated by AI demands:

Silicon Photonics: AI clusters require ever-lower power consumption per transmitted bit. Silicon photonics has emerged as the mainstream technology for 800G and 1.6T modules, offering advantages in power efficiency and cost. Silicon photonics currently accounts for 50–70% of the 800G/1.6T market. Major players have achieved 95% yields on self-developed silicon photonics chips, reducing costs by 30% compared to traditional approaches.

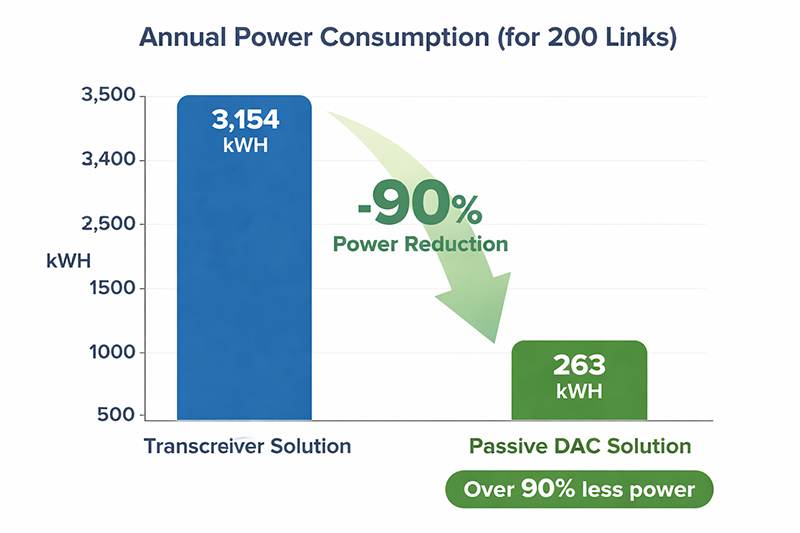

Linear Pluggable Optics (LPO): By removing the DSP chip from the optical module, LPO technology can reduce power consumption by up to 50% compared to traditional modules. LPO modules are particularly attractive for AI data centers, where power density is already a major constraint. LPO revenue is expected to account for approximately 15% of the optical module market, making it a significant growth segment.

Co-Packaged Optics (CPO): This technology integrates the optical engine directly with the switching ASIC, shortening the electrical signal path from 15–30 centimeters to less than 1 centimeter. Compared with an 800G pluggable optical module, this can reduce power consumption by over 85%. CPO is expected to achieve over 20% penetration in AI data centers by 2026, positioning it as a next-generation optical interconnect solution.
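Power per transmitted bit is module power divided by line rate. The sketch below applies the maximum reductions quoted above to an assumed 16 W baseline for a DSP-based 800G pluggable. The baseline, the use of the maximum quoted savings, and the 100,000-port fleet size are all illustrative assumptions.

```python
LINE_RATE_BPS = 800e9   # 800G port
BASELINE_W = 16.0       # assumed power of a DSP-based 800G pluggable
FLEET_SIZE = 100_000    # hypothetical number of 800G ports in a cluster


def picojoules_per_bit(power_w: float, rate_bps: float = LINE_RATE_BPS) -> float:
    """Energy per bit in pJ: power (W) / line rate (bit/s), scaled by 1e12."""
    return power_w / rate_bps * 1e12


# Per-port power after applying each quoted reduction to the assumed baseline.
options = {
    "DSP pluggable (baseline)": BASELINE_W,
    "LPO (50% lower)": BASELINE_W * (1 - 0.50),
    "CPO (85% lower)": BASELINE_W * (1 - 0.85),
}

for name, watts in options.items():
    fleet_kw = watts * FLEET_SIZE / 1e3
    print(f"{name:26s} {watts:5.1f} W  {picojoules_per_bit(watts):5.1f} pJ/bit  "
          f"{fleet_kw:7,.0f} kW per {FLEET_SIZE:,} ports")
```

Under these assumptions, energy per bit falls from 20 pJ/bit to about 10 pJ/bit with LPO and about 3 pJ/bit with CPO. At fleet scale, that is the difference between roughly 1.6 MW and 0.24 MW of optics power.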

4. The Interdependent Economic Relationship

Optical modules are not just a supporting component; they represent a significant portion of AI infrastructure investment. In optical communication systems, optical modules account for over 50% of system equipment costs. As AI capital expenditures continue to rise—with global cloud provider capex projected to maintain an upward trajectory through 2026—the optical module industry benefits disproportionately.

By 2029, the global optical module market is expected to exceed $41.5 billion, with AI data centers serving as the core growth driver. The industry is experiencing a virtuous cycle: more powerful AI models require more compute, which requires more GPUs, which requires more and faster optical interconnects. Each new generation of GPUs pushes optical module speeds higher, and each improvement in optical module technology enables larger, more capable AI clusters.

5. C-LIGHT: Enabling AI Infrastructure with High-Performance Optical Modules

C-LIGHT (Shenzhen C-Light Network Communication Co., Ltd.), founded in 2011 with 15 years of experience in fiber optic network products, has positioned itself as a key supplier to the AI infrastructure market. The company's product portfolio spans from 10G to 800G, addressing the diverse connectivity requirements of AI computing clusters.

800G Optical Modules for AI Clusters

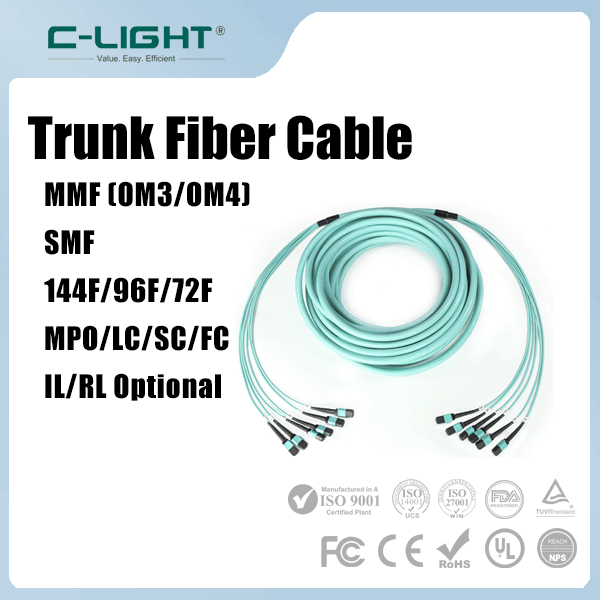

C-LIGHT's 800G OSFP SR8 100m InfiniBand optical transceiver is designed specifically for AI clusters and supercomputing centers. Built on the OSFP form factor and the InfiniBand NDR protocol, it reaches an 800Gbps transmission rate. Using 850nm VCSEL lasers and dual MPO-12 interfaces, it supports 100-meter low-latency transmission over OM4 multimode fiber, with power consumption below 16W across the 0 to 70°C temperature range.

The company's 800G OSFP product line covers multiple transmission distances, including VR8 50m, SR8 100m, DR8 500m, and 2×FR4 2km, supporting transmission rates up to 800Gb/s. These modules are widely applicable to data centers, cloud computing networks, high-performance computing, telecommunications markets, and enterprise applications. All products are compatible with over 100 switch brands, providing flexibility for AI infrastructure builders.
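The suffixes in these product names follow common industry conventions. The "8" in VR8, SR8, and DR8 counts parallel 100G lanes. "2×FR4" denotes two 400G FR4 links, each multiplexing four wavelengths onto one fiber per direction. The sketch below derives per-lane rate and fiber count from those conventions. The lane and wavelength counts are assumptions based on the naming scheme, not values taken from C-LIGHT datasheets, and the `Variant` class is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """An 800G OSFP variant described by an assumed lane layout."""
    name: str
    reach_m: int                # reach quoted in the product list
    lanes: int                  # optical lanes per direction
    wavelengths_per_fiber: int  # 1 for parallel fiber, 4 for CWDM "FR4"
    aggregate_gbps: int = 800

    @property
    def lane_rate_gbps(self) -> float:
        return self.aggregate_gbps / self.lanes

    @property
    def fiber_count(self) -> int:
        # Each lane needs a transmit and a receive path, so parallel optics
        # use two fibers per lane; WDM packs several lanes onto one fiber.
        return 2 * self.lanes // self.wavelengths_per_fiber


VARIANTS = [
    Variant("VR8", 50, lanes=8, wavelengths_per_fiber=1),
    Variant("SR8", 100, lanes=8, wavelengths_per_fiber=1),
    Variant("DR8", 500, lanes=8, wavelengths_per_fiber=1),
    Variant("2xFR4", 2_000, lanes=8, wavelengths_per_fiber=4),
]

for v in VARIANTS:
    print(f"{v.name:6s} {v.reach_m:>5,d} m  {v.lane_rate_gbps:.0f}G per lane  "
          f"{v.fiber_count} fibers")
```

For SR8 this gives 16 fibers, consistent with the dual MPO-12 interface, where each MPO-12 connector typically uses 8 of its 12 positions. The 2×FR4 variant needs only 4 fibers because of wavelength multiplexing.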

Comprehensive AI Connectivity Portfolio

Beyond 800G modules, C-LIGHT offers:

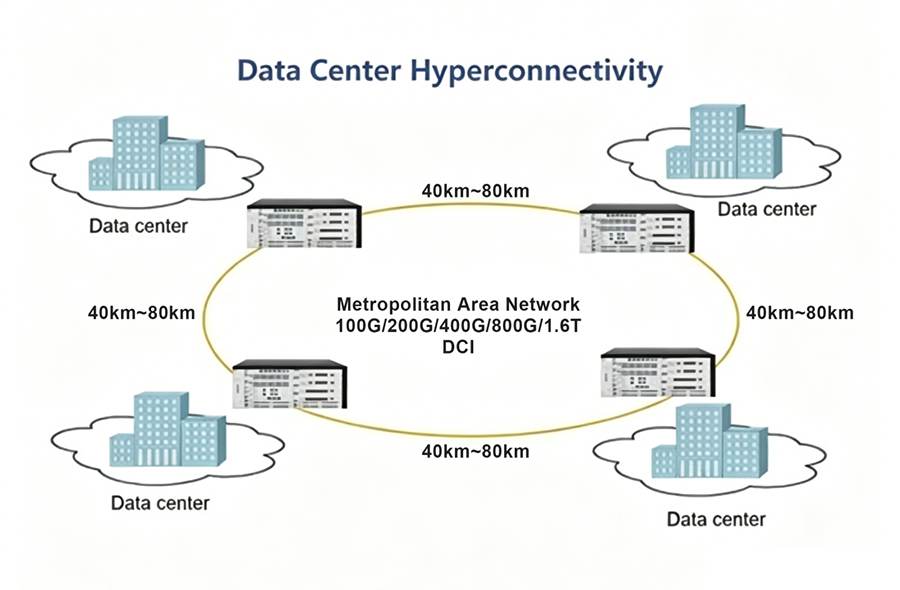

400G QSFP-DD FR4 optical transceivers for 400GbE applications over 2km single-mode fiber, suitable for data center interconnect scenarios

DWDM coherent transceivers covering rates from 100G DCO to 800G DCO, with transmission distances ranging from 120km to 2000km

Active Optical Cables (AOC) and Direct Attach Cables (DAC) for short-reach high-speed connections within AI server racks

High-performance Layer 3 switches such as the S5860 series, designed for high-density, high-bandwidth scenarios in enterprise networks and data centers

Quality and Supply Chain Advantages

With over 15 years of manufacturing experience, C-LIGHT maintains rigorous quality control, including 100% product testing across three quality control management stages. The company keeps permanent rolling inventory of its mainstream products, enabling 90% of products to ship within 2 to 3 days. All modules come with a three-year warranty and long-term technical support.

Conclusion

The relationship between AI and optical modules is fundamentally symbiotic. AI drives the need for ever-faster, more efficient optical connectivity, while advances in optical module technology enable larger, more powerful AI clusters. As the industry transitions from 800G to 1.6T and beyond, optical modules will remain at the heart of AI infrastructure, determining not just how fast AI models can be trained and served, but how cost-effectively the entire ecosystem can scale. Companies like C-LIGHT, with their comprehensive portfolio of high-speed optical transceivers and complementary products, are essential partners in building the optical networks that power the AI revolution.

TEL:+86 158 1857 3751
