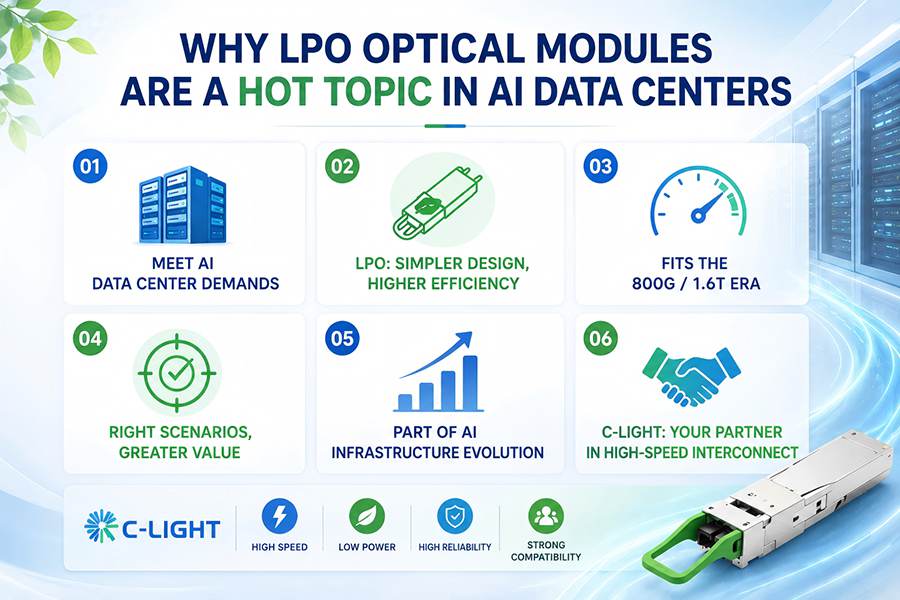

The competition in AI data centers is no longer defined solely by GPU computing power. Today, it is a comprehensive race involving compute performance, interconnect architecture, and energy efficiency. As large-scale AI model training continues to expand, the volume of data exchanged between nodes is growing rapidly. Traditional high-speed optical transceivers are approaching their limits in power consumption, thermal management, and overall system complexity. Against this backdrop, Linear Pluggable Optics (LPO) has evolved from a niche technology option into a practical solution for next-generation AI infrastructure.

The core value of LPO lies in simplifying or relocating some of the complex electrical signal processing functions traditionally embedded inside optical modules. By shortening the signal path and reducing unnecessary processing overhead, LPO enables lower power consumption, lower latency, and improved transmission efficiency. For AI data centers, this translates into reduced thermal design pressure, higher rack density, and the ability to deliver more effective bandwidth within the same power budget.

In other words, LPO is not simply solving a component-level problem — it is addressing the efficiency challenges of the entire AI networking infrastructure.

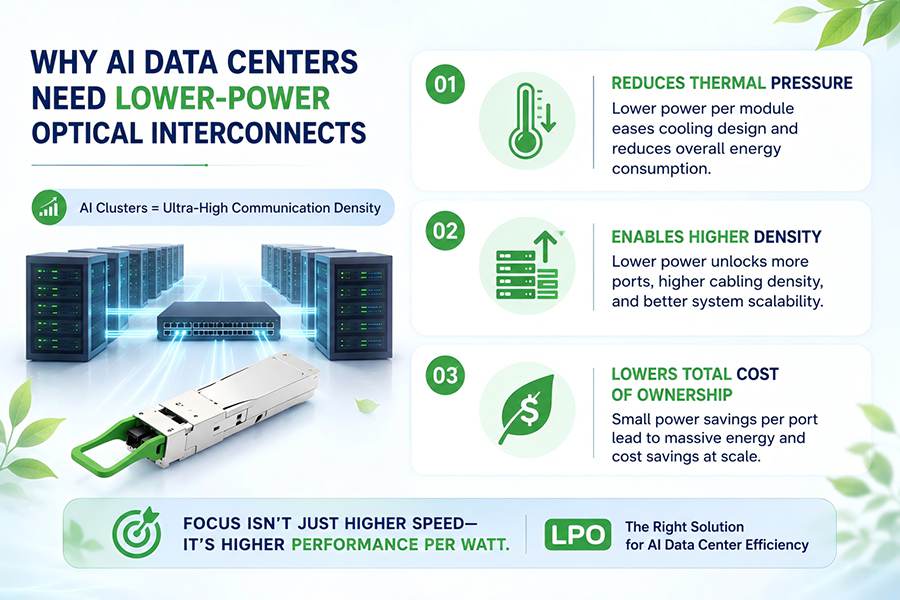

1. Why AI Data Centers Need Lower-Power Optical Interconnects

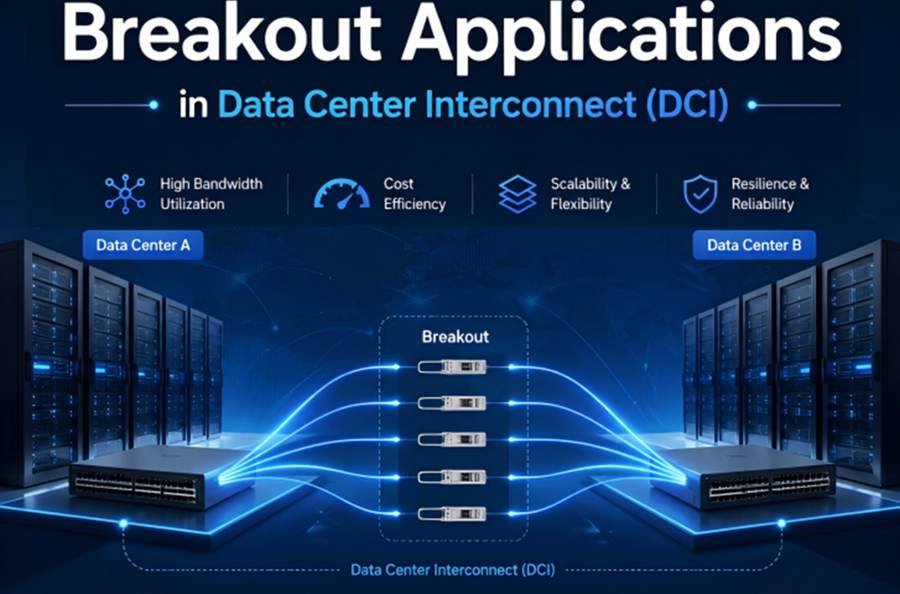

AI training clusters are characterized by extremely high communication density. Whether using model parallelism, data parallelism, or hybrid parallelism, GPUs, switches, and storage systems must continuously exchange massive volumes of data.

Although traditional high-speed optical modules can provide sufficient bandwidth, increasing transmission speeds also drive module power consumption significantly higher. This creates three major challenges:

● Increasing Thermal Pressure

Higher module power consumption leads to greater cooling requirements for switches and racks, directly increasing total data center energy consumption.

● Limited Deployment Density

As per-port power continues to rise, switch port density, cabling density, and overall system scalability become increasingly constrained.

● Rising Total Cost of Ownership (TCO)

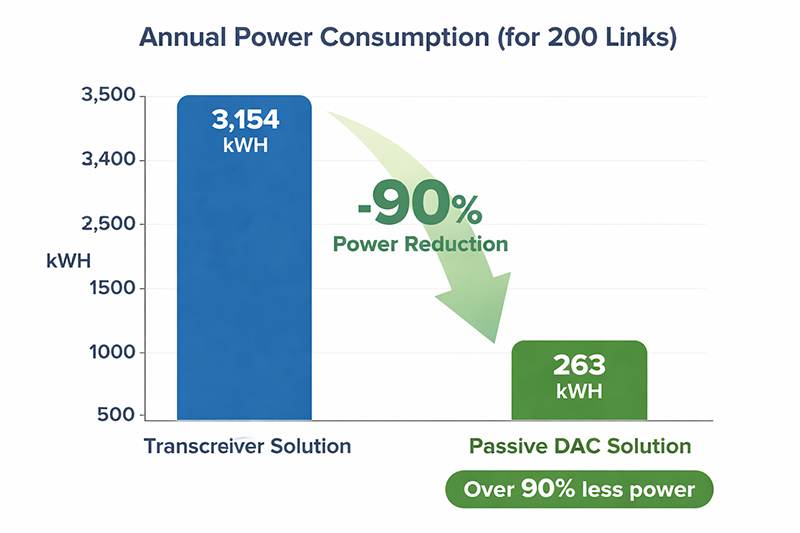

For hyperscale AI clusters, even saving a few watts per port can result in substantial energy savings when multiplied across thousands or even tens of thousands of ports.
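The per-port arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a sizing tool: the function name, the port count, the watts saved per port, and the PUE value are all assumptions chosen for the example, not figures from any specific deployment.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def annual_energy_savings_kwh(ports: int, watts_saved_per_port: float, pue: float = 1.0) -> float:
    """Energy saved per year (kWh) when each port draws `watts_saved_per_port` less.

    `pue` (power usage effectiveness) scales the saving to include cooling and
    power-delivery overhead; 1.0 counts module power only.
    """
    if ports < 0 or watts_saved_per_port < 0 or pue < 1.0:
        raise ValueError("ports and watts must be non-negative, and pue must be >= 1.0")
    # watts * hours = watt-hours; divide by 1000 to get kilowatt-hours
    return ports * watts_saved_per_port * pue * HOURS_PER_YEAR / 1000.0


# Hypothetical inputs: 20,000 ports, 5 W saved per port, PUE of 1.3.
savings = annual_energy_savings_kwh(ports=20_000, watts_saved_per_port=5.0, pue=1.3)
print(f"{savings:,.0f} kWh per year")
```

Under these assumed inputs the saving comes to roughly 1.14 GWh per year, which shows how a small per-port difference compounds at cluster scale.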

As a result, AI data centers are no longer focused solely on achieving higher transmission speeds. Instead, the industry is pursuing higher-speed networking with better power efficiency and overall cost-performance balance. This is precisely where LPO addresses a critical pain point.

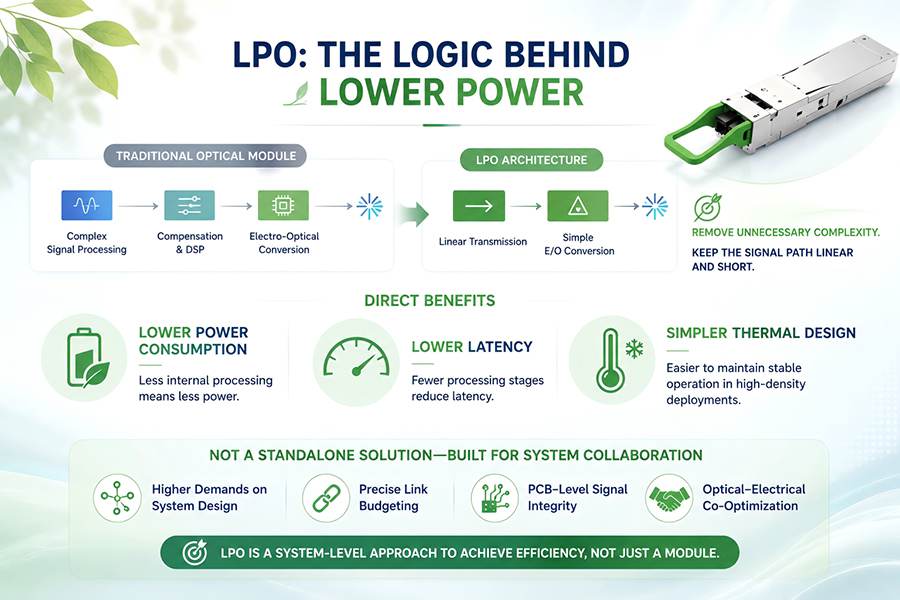

2. The Technical Logic Behind LPO: Why It Consumes Less Power

The design philosophy of LPO is not to make optical modules more complex, but rather to remove unnecessary complexity from within the module itself.

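Traditional pluggable optical transceivers typically rely on extensive internal electrical signal processing and compensation, usually performed by a digital signal processor (DSP) that retimes and equalizes the signal. In contrast, LPO uses a linear transmission architecture: linear driver and receiver amplifiers pass the signal through without retiming, and equalization is left to the SerDes on the host switch. The result is a simpler electro-optical conversion path that is best suited to shorter links.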

This architecture provides several direct advantages:

● Reduced power consumption by minimizing internal signal processing overhead

● Lower latency through fewer signal conditioning stages

● Simplified thermal design, improving stability in high-density deployments

However, LPO is not a universal solution.

Because it relies on a more linear transmission model, it places higher requirements on system-level design, including:

● Link budget optimization

● PCB-level signal integrity

● Co-design between optical and electrical components

● End-to-end system tuning

Therefore, LPO should not be viewed as an isolated product category. Instead, it represents a networking architecture philosophy centered around deeper system-level collaboration.
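To make the link budget requirement concrete, the sketch below computes the remaining margin of a simplified optical link in decibels. It is an illustration under stated assumptions: the `OpticalLink` fields, the function name, and all numeric values are hypothetical, and the model covers only optical power. A real LPO design would also need to account for electrical channel loss and host-side equalization, which are not modeled here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpticalLink:
    tx_power_dbm: float          # transmitter launch power
    rx_sensitivity_dbm: float    # minimum receive power for the target error rate
    fiber_length_km: float
    fiber_loss_db_per_km: float
    connector_count: int
    loss_per_connector_db: float
    penalties_db: float = 0.0    # lumped allowance for dispersion, reflections, aging


def link_margin_db(link: OpticalLink) -> float:
    """Remaining margin in dB: power budget minus all losses and penalties."""
    power_budget = link.tx_power_dbm - link.rx_sensitivity_dbm
    total_loss = (
        link.fiber_length_km * link.fiber_loss_db_per_km
        + link.connector_count * link.loss_per_connector_db
        + link.penalties_db
    )
    return power_budget - total_loss


# Hypothetical short-reach link; none of these values come from a datasheet.
example = OpticalLink(
    tx_power_dbm=-1.0,
    rx_sensitivity_dbm=-6.0,
    fiber_length_km=0.5,
    fiber_loss_db_per_km=0.4,
    connector_count=2,
    loss_per_connector_db=0.5,
    penalties_db=1.5,
)
margin = link_margin_db(example)
print(f"margin: {margin:.1f} dB ({'OK' if margin > 0 else 'insufficient'})")
```

A positive margin means the link closes with room to spare. Because an LPO module does not regenerate the signal internally, the available margin tends to be tighter, which is why the system-level items listed above matter.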

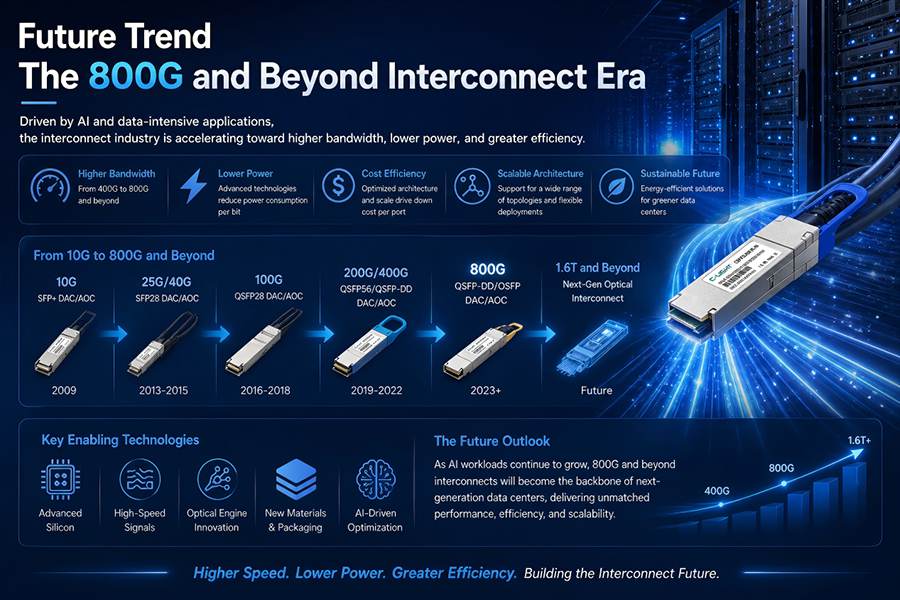

3. Why LPO Is Rapidly Gaining Attention in the 800G and 1.6T Era

Historically, the optical transceiver market focused primarily on higher bandwidth, longer transmission distances, and stronger compatibility.

But as 800G enters large-scale deployment and 1.6T technologies move toward commercialization, industry priorities are shifting: the question is no longer simply whether a system can reach a certain speed, but whether it can operate at that speed efficiently, reliably, and with lower power consumption.

LPO is gaining momentum for three key reasons:

● AI Traffic Patterns Favor Short-Reach, High-Density Interconnects

Many AI training networks do not require all the functions designed for traditional long-distance transmission environments. Instead, they benefit more from optimized short-reach, low-latency interconnect architectures.

● Power Consumption Has Become a Critical Constraint

As port counts scale rapidly, total power budgets become more important than peak per-port bandwidth alone.

● The Industry Is Seeking More Sustainable Technology Paths

In certain applications, LPO provides a better balance among power efficiency, cost, and performance.

This is why the rise of LPO is not simply a marketing trend — it is being driven by real-world AI infrastructure demands.
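The power-budget argument in this section can be illustrated with a short calculation: for a fixed power envelope reserved for optics, lower per-port power allows more ports and therefore more aggregate bandwidth. This is a rough sketch only. The function names and the 1,000 W, 16 W, and 9 W figures are illustrative placeholders, not measured values for any product.

```python
def ports_within_budget(power_budget_w: float, watts_per_port: float) -> int:
    """Number of whole ports that fit inside a fixed power budget."""
    if power_budget_w < 0 or watts_per_port <= 0:
        raise ValueError("budget must be non-negative and per-port power positive")
    return int(power_budget_w // watts_per_port)


def aggregate_bandwidth_tbps(power_budget_w: float, watts_per_port: float, gbps_per_port: float) -> float:
    """Total bandwidth (Tbps) deliverable within the power budget."""
    return ports_within_budget(power_budget_w, watts_per_port) * gbps_per_port / 1000.0


# Hypothetical comparison: 1,000 W reserved for optics, 800G ports,
# 16 W per conventional module versus 9 W per linear module.
BUDGET_W = 1000.0
for label, watts in (("conventional (assumed 16 W)", 16.0), ("linear (assumed 9 W)", 9.0)):
    ports = ports_within_budget(BUDGET_W, watts)
    tbps = aggregate_bandwidth_tbps(BUDGET_W, watts, 800.0)
    print(f"{label}: {ports} ports, {tbps:.1f} Tbps")
```

The comparison ignores switch ASIC power, cooling, and faceplate limits, so it only shows the direction of the effect rather than a deployable port count.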

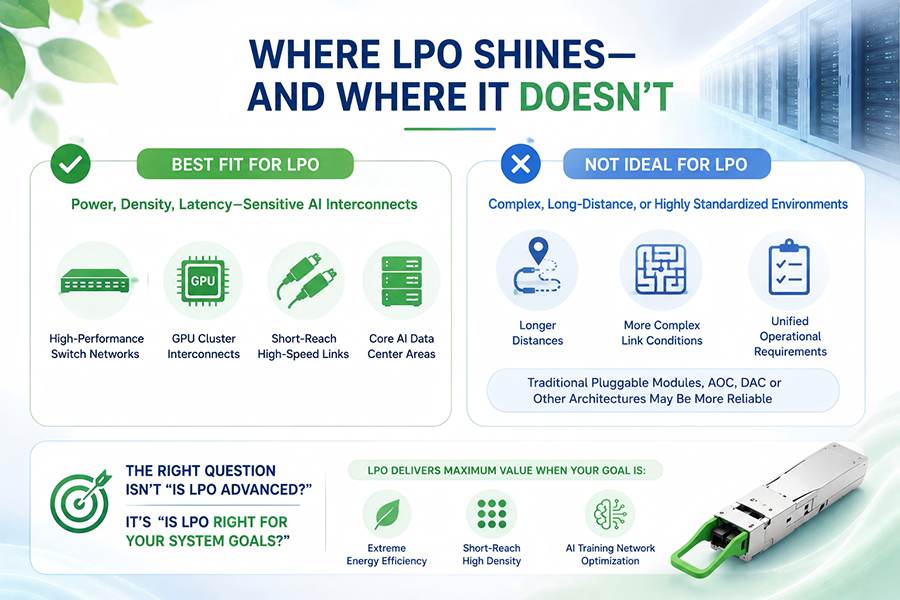

4. Where LPO Fits Best — and Where It Does Not

From an application perspective, LPO is particularly well suited for AI interconnect environments that are highly sensitive to:

● Power consumption

● Deployment density

● Latency

● Thermal efficiency

Typical scenarios include:

● High-performance switching fabrics

● GPU cluster interconnects

● Short-reach ultra-high-speed links

● Core AI data center networking environments

However, LPO is not ideal for every deployment scenario.

For environments involving longer transmission distances, more complex link conditions, or highly standardized operational requirements, traditional pluggable optical transceivers, AOC, DAC, or other architectures may still offer a more stable and practical solution.

The value of LPO lies in scenario-specific optimization rather than universal replacement.

For network architects and procurement teams, the key question is not whether LPO is technologically advanced, but whether it aligns with current infrastructure goals. If the objective is ultra-high energy efficiency, short-reach high-density networking, and AI training optimization, LPO becomes extremely attractive.

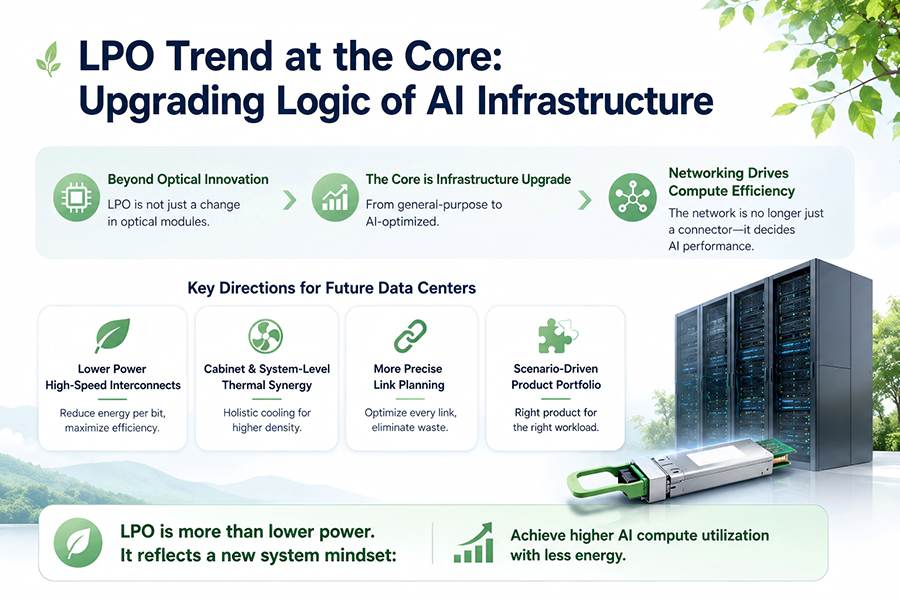

5. The Rise of LPO Reflects the Evolution of AI Infrastructure

On the surface, LPO appears to be another shift in optical transceiver technology. In reality, it reflects a broader transformation in AI infrastructure architecture.

Traditional data centers emphasized general-purpose compatibility and standardization. Modern AI data centers increasingly prioritize workload-specific optimization.

Today, the network layer is no longer just connecting devices — it directly influences computing efficiency and AI cluster utilization.

Future data center development will increasingly focus on:

● Lower-power high-speed interconnect products

● Rack-level and system-level thermal coordination

● More refined link planning

● Scenario-oriented infrastructure design rather than one-size-fits-all hardware

In this sense, LPO is becoming a hotspot not simply because it saves power, but because it represents a new infrastructure philosophy: achieving higher AI computing efficiency with lower overall energy consumption.

6. C-LIGHT’s Value in High-Speed AI Interconnect Solutions

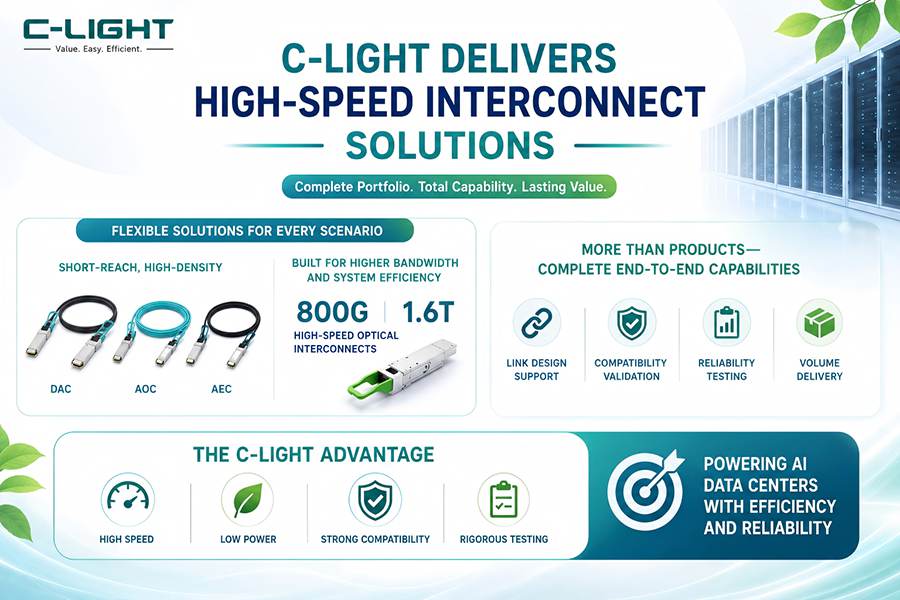

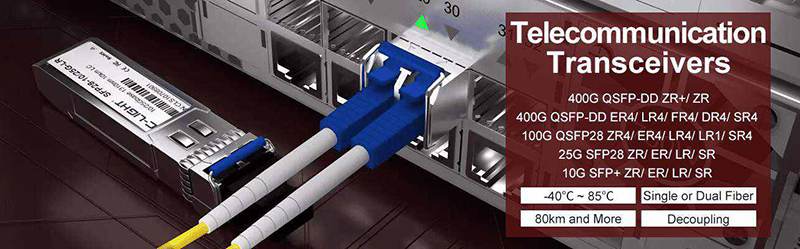

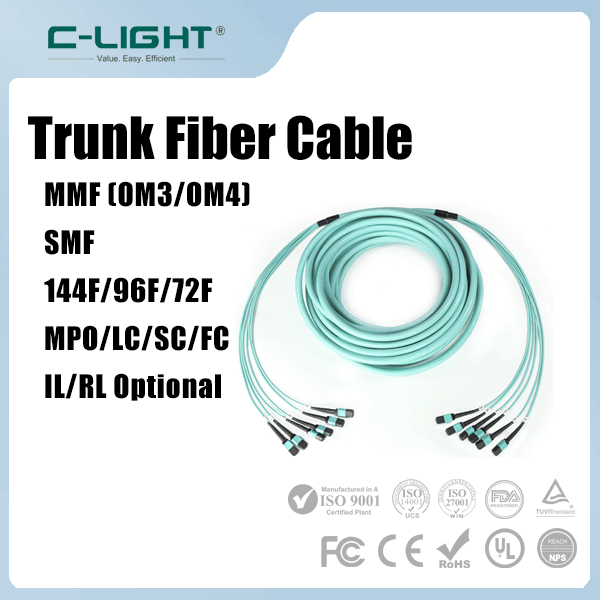

As AI data center interconnect architectures continue to evolve, C-LIGHT provides a portfolio of high-speed connectivity solutions tailored to different deployment scenarios.

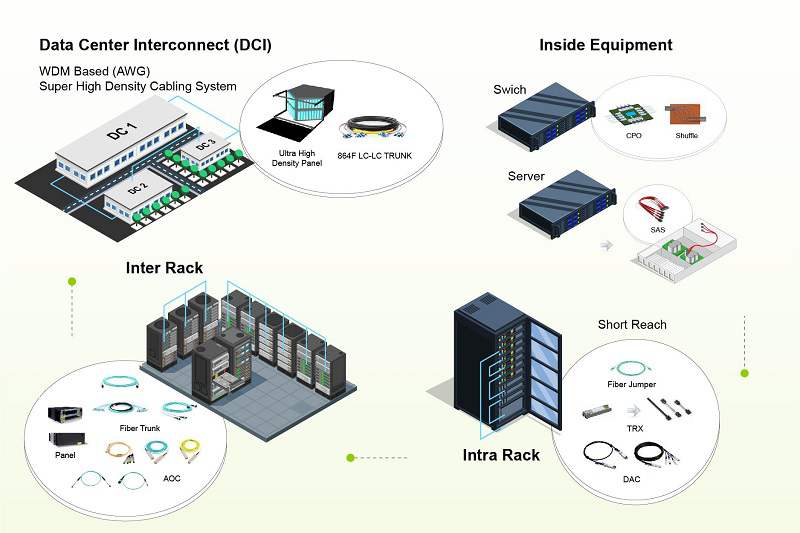

For high-density short-reach interconnects, flexible configurations can be built using:

● DAC (Direct Attach Cable)

● AOC (Active Optical Cable)

● AEC (Active Electrical Cable)

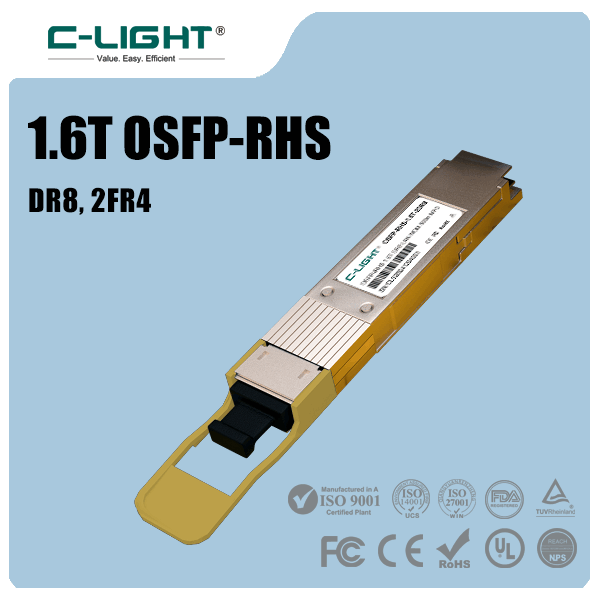

For environments requiring higher bandwidth and improved system efficiency, C-LIGHT is also actively expanding its high-speed optical interconnect portfolio for the 800G and 1.6T era.

For enterprise customers, long-term value is not defined by a single product alone, but by complete end-to-end capabilities including:

● Link architecture design

● Compatibility validation

● Reliability testing

● Large-scale delivery support

C-LIGHT’s strengths are reflected in its integrated support for:

● High-speed networking

● Low-power designs

● Strong compatibility

● Rigorous testing standards

As LPO and next-generation optical interconnect technologies continue to mature, suppliers with comprehensive product portfolios and strong validation capabilities will be better positioned to establish long-term competitiveness in the AI data center market.

Conclusion

The rapid rise of LPO optical transceivers in AI data centers is not accidental.

It is the direct result of growing demand for lower-power, higher-density, and lower-latency interconnect solutions within AI clusters. More importantly, it reflects the industry's transition from a “performance-first” mindset to an “efficiency-first” networking strategy.

For enterprises planning next-generation AI infrastructure, LPO is not merely another technical buzzword. It is a key to understanding the future evolution of data center architecture.

As the industry transitions from 800G toward 1.6T and AI computing demand continues to surge, organizations that can best balance performance, energy efficiency, and reliability will be in the strongest position during the next wave of infrastructure transformation.

TEL:+86 158 1857 3751
