1. Introduction

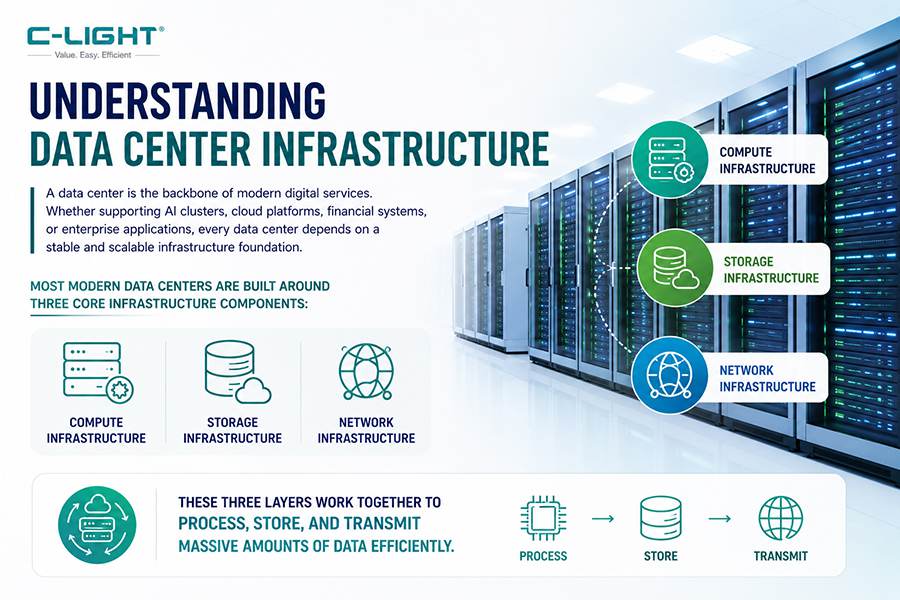

With the explosive growth of AI large model training, high-performance computing, and cloud services, per-rack power density in data centers continues to surge. Rack power density in AI computing clusters has already jumped from the traditional 5-10 kW to over 40 kW, with some high-end AI racks reaching 100 kW or even 120 kW. The Thermal Design Power (TDP) of NVIDIA's Rubin GPU is expected to rise from 1.8 kW to 2.3 kW, already exceeding the cooling capacity limits of traditional cold plate liquid cooling systems. Against this backdrop, liquid cooling is shifting from an "optional configuration" to a "mandatory requirement" and becoming indispensable core infrastructure for intelligent computing centers.

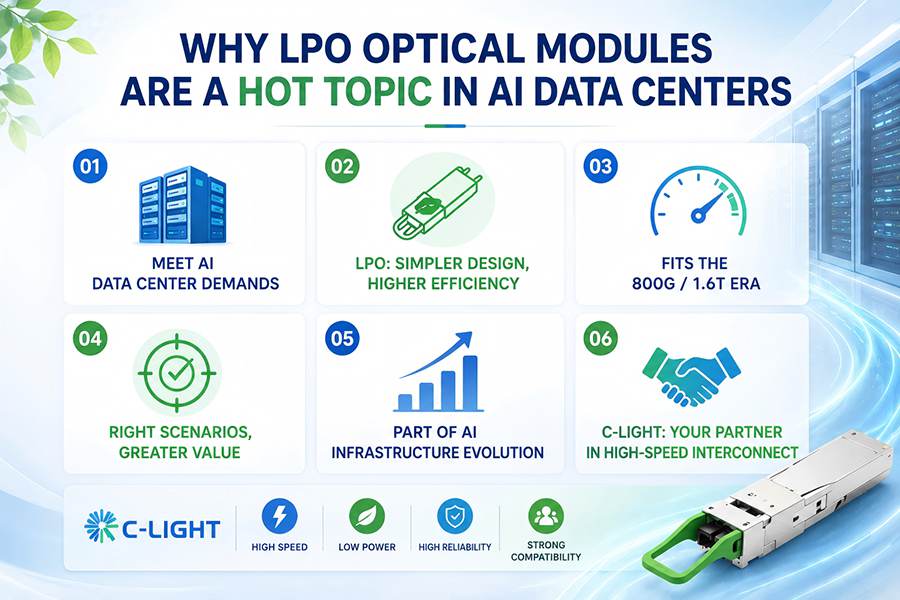

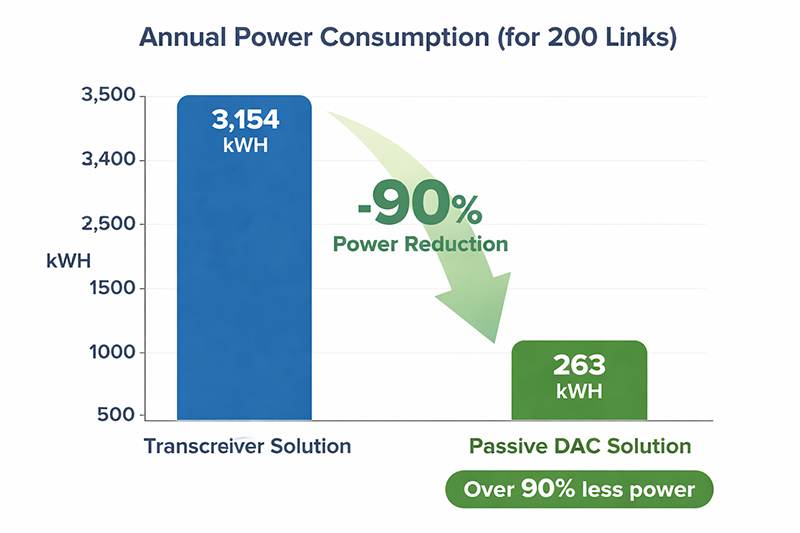

As core components of data center network interconnects, optical transceivers are also drawing significantly more power as data rates rise: a 400G transceiver consumes approximately 12W, an 800G transceiver around 18W, and a 1.6T transceiver about 24W, while future 3.2T transceivers are expected to exceed 40W. Once transceiver power reaches 32W, traditional dry-contact air cooling can produce hotspot temperature differentials as high as 25.6°C, pushing the device well beyond its normal operating temperature thresholds. Consequently, optical transceiver cooling is undergoing a systemic migration from air cooling to liquid cooling.
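Dividing the module power figures above by line rate gives energy per bit, which makes the trend concrete: efficiency per bit improves with each generation, yet total heat per module and per switch still climbs. The sketch below assumes a hypothetical 64-port switch with every port populated, and uses 40W as a floor for 3.2T, since the text only says it will exceed that.

```python
# Module power figures (W) quoted in the text, keyed by line rate in Gbps.
# The 3.2T value is a lower bound: the text says it "will exceed 40W".
MODULE_POWER_W = {400: 12.0, 800: 18.0, 1600: 24.0, 3200: 40.0}


def energy_per_bit_pj(power_w: float, rate_gbps: float) -> float:
    """Energy per bit in pJ: W / Gbps gives nJ/bit, times 1000 gives pJ/bit."""
    return power_w / rate_gbps * 1000.0


def faceplate_load_w(power_w: float, ports: int) -> float:
    """Total optics heat load of a switch with every port populated."""
    return power_w * ports


if __name__ == "__main__":
    PORTS = 64  # hypothetical port count, for illustration only
    for rate, power in MODULE_POWER_W.items():
        print(f"{rate}G: {energy_per_bit_pj(power, rate):.1f} pJ/bit, "
              f"{faceplate_load_w(power, PORTS):.0f} W across {PORTS} ports")
```

Under these assumptions, energy per bit falls from about 30 pJ/bit at 400G to 15 pJ/bit at 1.6T, while the optics load of the 64-port switch doubles from 768W to 1,536W.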

Shenzhen Chengguang Network Communication Co., Ltd. (C-LIGHT), established in 2011, possesses 15 years of experience in optical transceiver R&D and manufacturing. Its product portfolio covers a full range of high-speed optical transceivers including 800G, 2×400G, 400G, 200G, 100G, 50G, 25G, and 10G. C-LIGHT's product lines are widely deployed in AI computing clusters and data center networks, providing a rich selection of optical interconnect solutions for liquid-cooled data centers. This article will systematically introduce the application solutions and adaptation characteristics of C-LIGHT's 25G/100G/400G/800G series optical transceivers within liquid-cooled data center environments.

2. Background of Data Center Liquid Cooling Technology

2.1 Overview of Liquid Cooling Technology Routes

Current data center liquid cooling technologies fall into three main types: Cold Plate Liquid Cooling, Immersion Cooling, and Spray Cooling. Cold plate liquid cooling is by far the market mainstream, accounting for over 80% of liquid-cooled data center deployments. Liquid-cooled cold plates are mounted on heat-generating chips such as CPUs and GPUs, and liquid flowing through the plates carries the heat away; because the coolant never touches the electronics, this is an indirect-contact form of liquid cooling. Its core advantages are strong compatibility and low retrofit cost, and it can hold PUE between 1.1 and 1.2. NVIDIA's current GB200 and GB300 series AI servers all use cold plate liquid cooling, covering core components such as CPUs, GPUs, and memory.

Immersion cooling involves completely submerging servers in insulating coolant fluids, such as electronic fluorinated fluids or mineral oils. It achieves efficient heat dissipation through direct contact between the liquid and heat-generating components, enabling extreme energy efficiency with PUE values below 1.05. This technology is widely recognized as the core direction for future high-density computing scenarios, particularly suitable for supercomputing and general computing environments.
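PUE is total facility power divided by IT equipment power, so PUE minus 1 is the fraction of IT power spent on cooling and other overhead. A minimal sketch comparing the PUE values quoted above for a hypothetical 1 MW IT load running at constant draw all year; 1.05 is used for immersion even though the text gives it as an upper bound.

```python
HOURS_PER_YEAR = 8760


def facility_power_kw(it_kw: float, pue: float) -> float:
    """PUE = total facility power / IT power, so total = IT power * PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_kw * pue


def annual_overhead_mwh(it_kw: float, pue: float) -> float:
    """Non-IT energy (cooling, power distribution, etc.) per year, in MWh."""
    overhead_kw = facility_power_kw(it_kw, pue) - it_kw
    return overhead_kw * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    IT_LOAD_KW = 1000.0  # hypothetical 1 MW IT load at constant draw
    cases = [("cold plate, PUE 1.2", 1.2),
             ("cold plate, PUE 1.1", 1.1),
             ("immersion, PUE 1.05", 1.05)]
    for label, pue in cases:
        print(f"{label}: {annual_overhead_mwh(IT_LOAD_KW, pue):.0f} MWh/yr overhead")
```

In this idealized model, the gap between PUE 1.2 and 1.05 works out to 1,314 MWh per year for each megawatt of IT load.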

2.2 Liquid Cooling Market Size and Trends

The global data center liquid cooling market is growing rapidly. Sealand Securities, assuming an average of $1,376 per AI chip, forecasts that the overall data center liquid cooling market will reach $16.5 billion (approximately 116.2 billion RMB) by 2026, with a compound annual growth rate (CAGR) of about 59% from 2025 to 2026. The North American liquid cooling market is projected to reach $10 billion in 2026, and the domestic Chinese market 11.3 billion RMB in the same year. TrendForce projects that liquid cooling penetration in AI data centers will reach 40% by 2026, up from only 14% in 2024.
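A few quick consistency checks can be run on these figures. The sketch reads the $1,376 figure as the liquid-cooling value attributable to each AI chip and treats the forecast as that value multiplied by shipment volume; both are assumptions about how the estimate was built, not statements from Sealand Securities.

```python
MARKET_2026_USD = 16.5e9   # forecast quoted in the text
MARKET_2026_RMB = 116.2e9  # RMB equivalent quoted in the text
PER_CHIP_USD = 1376.0      # per-AI-chip figure quoted in the text
GROWTH = 0.59              # ~59% growth, 2025 -> 2026


def implied_chip_volume(market_usd: float, per_chip_usd: float) -> float:
    """Chip count implied if market = per-chip value * volume (an assumption)."""
    return market_usd / per_chip_usd


def implied_start_value(end_value: float, cagr: float, years: int = 1) -> float:
    """Back out the starting value from an end value and a CAGR."""
    return end_value / (1.0 + cagr) ** years


def implied_fx_rate(amount_rmb: float, amount_usd: float) -> float:
    """RMB per USD implied by a pair of quoted amounts."""
    return amount_rmb / amount_usd


if __name__ == "__main__":
    chips = implied_chip_volume(MARKET_2026_USD, PER_CHIP_USD)
    start = implied_start_value(MARKET_2026_USD, GROWTH)
    fx = implied_fx_rate(MARKET_2026_RMB, MARKET_2026_USD)
    print(f"Implied AI chips: {chips / 1e6:.1f} million")
    print(f"Implied 2025 market: ${start / 1e9:.2f} billion")
    print(f"Implied exchange rate: {fx:.2f} RMB/USD")
```

Under these assumptions the figures imply roughly 12 million AI chips, a 2025 market of about $10.4 billion, and an exchange rate of about 7.04 RMB per USD.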

3. Overview of C-LIGHT Optical Transceiver Product Portfolio

3.1 25G SFP28 Series

C-LIGHT's 25G SFP28 series optical transceivers utilize the SFP28 form factor, supporting a data rate of 25 Gbps and covering a full range of application scenarios from short-reach to long-haul transmission. Primary specifications include: 25G SR (100m over MMF), 25G LR (10km over SMF), 25G ER (40km over SMF), 25G BiDi (10km to 60km over single-fiber bidirectional), and 25G DWDM tunable wavelength products. This series complies with the IEEE 802.3cc communication protocol, SFF-8431/8432/8472 interface protocols, and the SFP28 MSA form factor protocol, ensuring excellent interoperability and compatibility.
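SFF-8472, one of the interface specifications this series follows, defines digital diagnostic monitoring (DDM) fields that a host can poll to track module temperature, which matters when modules sit in a liquid-cooled chassis. The sketch below decodes temperature (bytes 96-97) and supply voltage (bytes 98-99) from the diagnostics page at 2-wire address A2h. It assumes an internally calibrated module (externally calibrated modules need the calibration constants that SFF-8472 also defines) and assumes the 256-byte page has already been read by whatever host interface is available; reading it is outside this sketch.

```python
def decode_temperature_c(msb: int, lsb: int) -> float:
    """SFF-8472 temperature: signed 16-bit two's complement, 1/256 degC per LSB."""
    raw = (msb << 8) | lsb
    if raw & 0x8000:  # sign bit set -> negative temperature
        raw -= 1 << 16
    return raw / 256.0


def decode_vcc_v(msb: int, lsb: int) -> float:
    """SFF-8472 supply voltage: unsigned 16-bit, 100 uV per LSB."""
    return ((msb << 8) | lsb) * 100e-6


def read_ddm(a2h_page: bytes) -> dict:
    """Extract temperature and Vcc from an A2h page (internal calibration assumed)."""
    if len(a2h_page) < 100:
        raise ValueError("A2h page too short to contain bytes 96-99")
    return {
        "temperature_c": decode_temperature_c(a2h_page[96], a2h_page[97]),
        "vcc_v": decode_vcc_v(a2h_page[98], a2h_page[99]),
    }


if __name__ == "__main__":
    # Synthetic page: 0x2880 -> 40.5 degC, 0x80E8 (33000) -> 3.3 V
    page = bytearray(256)
    page[96:100] = bytes([0x28, 0x80, 0x80, 0xE8])
    print(read_ddm(bytes(page)))
```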

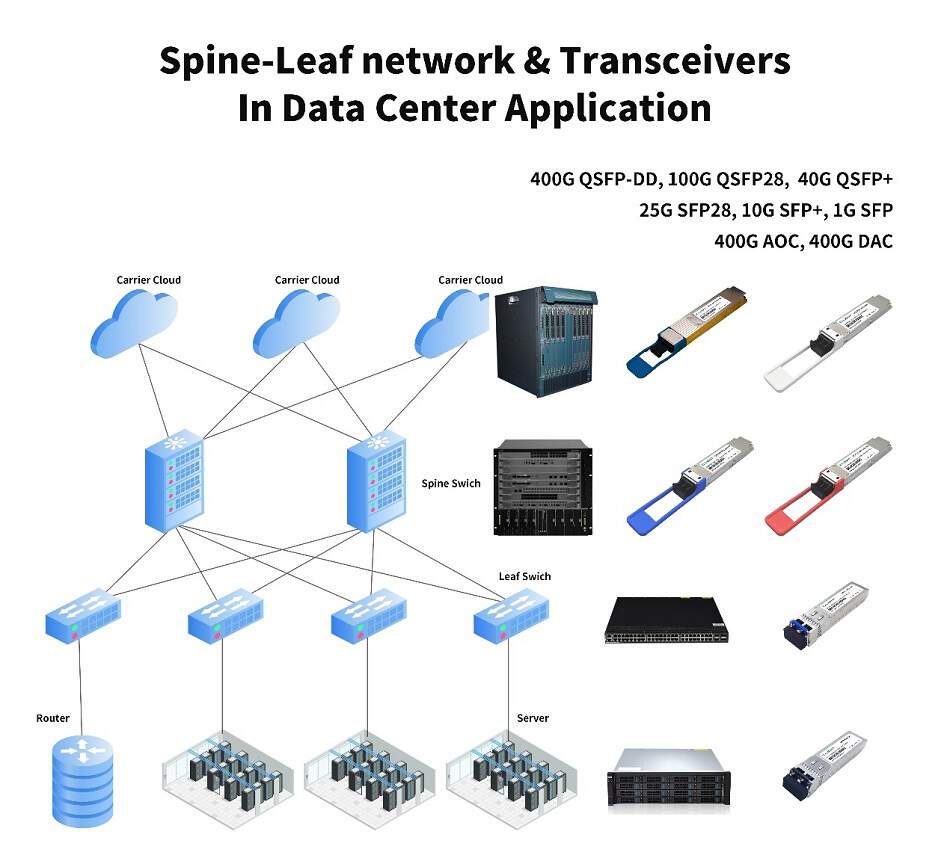

3.2 100G QSFP28 Series

C-LIGHT's 100G QSFP28 series optical transceivers utilize the QSFP28 form factor, offering a variety of transmission distance options. Key specifications cover: 100G SR4 (100m over MMF, MPO connector), 100G PSM4 (2km over SMF, MPO connector), 100G LR4 (10km over SMF, LC connector, 1310nm wavelength), 100G ZR4 (80km over SMF, LC connector), and 100G Single Lambda BiDi (40km over single-fiber bidirectional). This series is widely applied in intra-rack data center interconnects, Spine-Leaf network architectures, and metro access layer scenarios.

3.3 400G QSFP-DD/OSFP Series

C-LIGHT's 400G series optical transceivers support both mainstream form factors, QSFP-DD and OSFP. Most variants are built on 4×100G PAM4 lanes; 400G SR8 instead uses eight 50G PAM4 lanes, and the ZR/ZR+ products use coherent modulation. Primary specifications include: 400G SR8 (100m over MMF, MPO connector), 400G DR4/DR4+ (500m over SMF, MPO connector), 400G FR4 (2km over SMF, CWDM, LC connector), 400G LR4 (10km over SMF, CWDM, LC connector), 400G ER4 (40km over SMF, LAN WDM, LC connector), and 400G ZR/ZR+ coherent products (120km to over 500km, coherent DCO technology). Among these, the 400G ZR+ coherent optical transceiver, based on DCO technology with C-band tunable wavelengths, provides a cost-effective long-distance transmission solution for Data Center Interconnect (DCI).

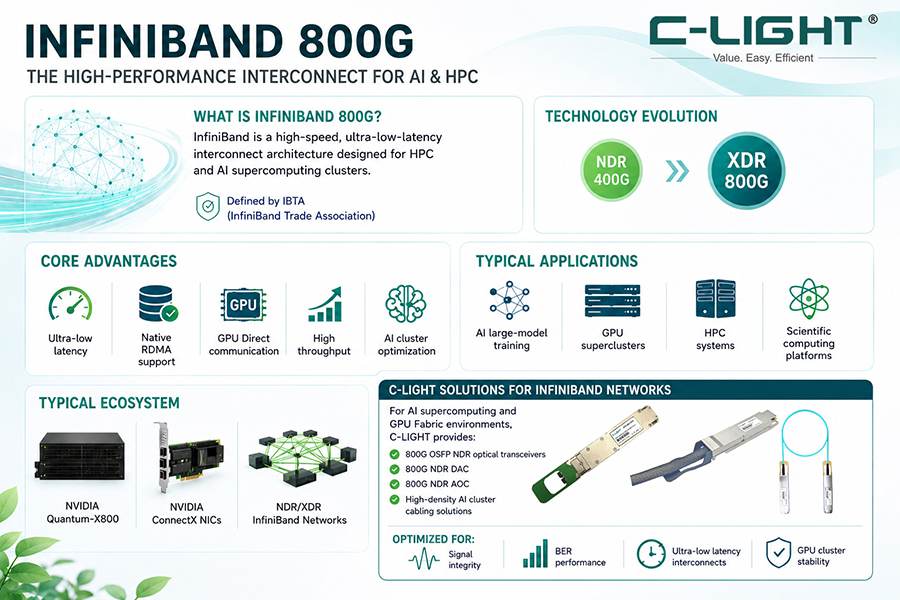

3.4 800G OSFP/QSFP-DD Series

C-LIGHT's 800G series optical transceivers are available in OSFP and QSFP-DD form factors and reach a total bandwidth of 800 Gbps through an 8-channel electrical interface with PAM4 modulation. Primary specifications include: 800G SR8 (100m over MMF, 850nm VCSEL, 2×MPO-12/MPO-16 connector), 800G DR8/2×DR4 (500m over parallel SMF, single 1310nm wavelength), 800G 2×FR4 (2km over SMF, CWDM, LC/CS connector), 800G 2×LR4 (10km over SMF, CWDM), and 800G ZR+ coherent products (450km long-haul transmission). This series is designed for the ultra-high-bandwidth, low-latency links inside AI clusters and hyperscale data centers, as well as for the interconnects between them.
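The reach options in Sections 3.1-3.4 can be treated as a small lookup table. The hypothetical helper below picks, for a given rate, fiber type, and link length, the option with the shortest rated reach that still covers the link, using shortest reach as a rough proxy for cost and power (an assumption, not a C-LIGHT selection rule). BiDi, DWDM, and coherent variants are left out for brevity, and rated reach is nominal; a real choice also depends on the fiber plant and link budget.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Module:
    name: str
    rate_gbps: int
    fiber: str    # "MMF" or "SMF"
    reach_m: int  # nominal maximum reach quoted in Section 3


# Subset of the portfolio from Sections 3.1-3.4 (BiDi, DWDM, coherent omitted).
CATALOG = [
    Module("25G SR", 25, "MMF", 100),
    Module("25G LR", 25, "SMF", 10_000),
    Module("25G ER", 25, "SMF", 40_000),
    Module("100G SR4", 100, "MMF", 100),
    Module("100G PSM4", 100, "SMF", 2_000),
    Module("100G LR4", 100, "SMF", 10_000),
    Module("100G ZR4", 100, "SMF", 80_000),
    Module("400G SR8", 400, "MMF", 100),
    Module("400G DR4", 400, "SMF", 500),
    Module("400G FR4", 400, "SMF", 2_000),
    Module("400G LR4", 400, "SMF", 10_000),
    Module("400G ER4", 400, "SMF", 40_000),
    Module("800G SR8", 800, "MMF", 100),
    Module("800G DR8", 800, "SMF", 500),
    Module("800G 2xFR4", 800, "SMF", 2_000),
    Module("800G 2xLR4", 800, "SMF", 10_000),
]


def pick_module(rate_gbps: int, distance_m: float, fiber: str) -> Optional[Module]:
    """Return the shortest-reach option that covers the link, or None."""
    fits = [m for m in CATALOG
            if m.rate_gbps == rate_gbps and m.fiber == fiber
            and m.reach_m >= distance_m]
    return min(fits, key=lambda m: m.reach_m, default=None)


if __name__ == "__main__":
    for rate, dist, fiber in [(400, 1500, "SMF"), (800, 80, "MMF"), (800, 50_000, "SMF")]:
        choice = pick_module(rate, dist, fiber)
        print(rate, dist, fiber, "->", choice.name if choice else "no match in subset")
```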

4. Technical Challenges and Adaptation Solutions for Optical Transceivers in Liquid-Cooled Environments

4.1 Evolution of Optical Transceiver Power Consumption and Cooling Requirements

Optical transceiver power consumption rises sharply with data rate, accelerating the migration of cooling solutions from air to liquid. In liquid-cooled data centers, transceivers face thermal, sealing, structural, and material-compatibility challenges, and their thermal design must be coordinated with the rack-level liquid cooling scheme.

Cooling Adaptation Strategies for Different Data Rate Tiers: In terms of power density, optical transceivers rated at 400G and below typically stay below 15W/cm². Air cooling still meets their needs in conventional data center environments, and liquid cooling serves only as a backup or supplementary option in extreme scenarios such as high-temperature, sealed, or edge-computing deployments. 800G optical transceivers, at approximately 15-25W/cm², sit in the transition zone between what air and liquid cooling can handle, and liquid cooling offers clear advantages in high-density deployments such as AI computing clusters. C-LIGHT's product portfolio covers every power tier, allowing users to select a cooling solution based on their specific deployment conditions.
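The tiering above reduces to a simple threshold rule. In this sketch the heat-dissipating area is a hypothetical input (the text does not say which area its W/cm² figures refer to), and the "liquid" tier above 25W/cm² is an extrapolation from the stated 15-25W/cm² transition zone rather than a figure from the text.

```python
AIR_LIMIT_W_CM2 = 15.0         # below this, air cooling typically suffices
TRANSITION_LIMIT_W_CM2 = 25.0  # upper edge of the stated transition zone


def power_density_w_cm2(power_w: float, area_cm2: float) -> float:
    """Heat flux in W/cm^2 over the chosen heat-dissipating area."""
    if area_cm2 <= 0:
        raise ValueError("area must be positive")
    return power_w / area_cm2


def cooling_tier(density_w_cm2: float) -> str:
    """Map a power density onto the tiers described in this section."""
    if density_w_cm2 < AIR_LIMIT_W_CM2:
        return "air"
    if density_w_cm2 <= TRANSITION_LIMIT_W_CM2:
        return "air/liquid transition"
    return "liquid"  # extrapolated: above the stated transition zone


if __name__ == "__main__":
    # Hypothetical module: 18 W over a 1.0 cm^2 hot-spot area
    d = power_density_w_cm2(18.0, 1.0)
    print(d, cooling_tier(d))
```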

4.2 Sealing and Compatibility of Optical Transceivers in Immersion Cooling Environments

Immersion cooling submerges the entire optical transceiver in an insulating coolant, removing heat through direct contact between the liquid and every heat-generating component. This raises a significant technical challenge: the transceiver's optical path must be completely physically isolated from the coolant, because any liquid ingress would impair optical signal transmission. Core technologies for immersion-cooled optical transceivers focus on the following aspects:

(1) Multi-Stage Sealing Structure Design: For dynamic sealing, pluggable interfaces utilize magnetic fluid seals or dual mechanical seals (e.g., silicon carbide mating rings) with leakage rates controlled below 0.1 mL/h, suitable for frequent plugging/unplugging scenarios. For static sealing, the junction between the fiber optic connector and the module housing employs perfluoroelastomer (FFKM) or modified PTFE gaskets, resistant to coolant corrosion with a seal life expectancy of up to 5 years. Critical interface areas feature dual O-rings combined with metal compression seals to establish multiple leakage prevention barriers.

(2) Hermetic Packaging and Optical Path Isolation: An independent hermetic cavity design encapsulates core optical path components, such as the optical chip and fiber interface, in isolated sealed cavities that keep coolant out. The coolant flow path is also rigidly and physically separated from the optical signal path, so that heat reaches the coolant only by thermal conduction. The prevailing industry process fully encases the optical engine by injection-molded encapsulation to isolate it from the coolant.

(3) Special Materials and Surface Treatments: Fiber optic interfaces utilize anti-permeation nano-coatings. High-density encapsulation materials compatible with the coolant are selected, such as transparent housings made of polyimide with nano-coatings, ensuring optical signal transmittance while preventing coolant penetration. Optical transceiver material selection must strictly match the liquid cooling environment, passing compatibility tests to prevent chemical reactions between materials and coolant that could lead to seal failure.

(4) Factory Sealing Reliability Verification: Before shipment, all liquid-cooled optical transceiver products undergo 72-hour pressurized testing at 0.5 kgf/cm² (about 49 kPa) coolant pressure, long-term immersion testing, and pressure cycling testing to validate sealing stability in liquid cooling environments.
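Because the 0.1 mL/h figure in item (1) is a ceiling for the dynamic seals, converting it into volumes over longer periods shows the most coolant a compliant seal could lose, which can help with coolant top-up planning. The 32-seal tank below (one dynamic seal per module) is hypothetical, and actual leakage may be far lower than the ceiling.

```python
HOURS_PER_DAY = 24
HOURS_PER_YEAR = 8760
LEAK_CEILING_ML_PER_H = 0.1  # dynamic-seal ceiling quoted in item (1)


def worst_case_loss_ml(leak_ml_per_h: float, hours: float, seals: int = 1) -> float:
    """Upper bound on coolant lost if every seal leaked at the ceiling rate."""
    return leak_ml_per_h * hours * seals


if __name__ == "__main__":
    per_day = worst_case_loss_ml(LEAK_CEILING_ML_PER_H, HOURS_PER_DAY)
    per_year = worst_case_loss_ml(LEAK_CEILING_ML_PER_H, HOURS_PER_YEAR)
    # Hypothetical tank with 32 modules, one dynamic seal each
    tank_year = worst_case_loss_ml(LEAK_CEILING_ML_PER_H, HOURS_PER_YEAR, seals=32)
    print(f"per seal: {per_day:.1f} mL/day, {per_year:.0f} mL/year")
    print(f"32-seal tank: {tank_year / 1000:.1f} L/year")
```

At the ceiling rate a single seal could lose up to 2.4 mL per day or 876 mL per year, and the hypothetical 32-seal tank about 28 L per year.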

4.3 Structural Adaptation Solutions for Cold Plate Liquid-Cooled Optical Transceivers

In cold plate liquid cooling deployments, structural adaptation between the optical transceiver and the cold plate cooling system presents a significant engineering challenge. Optical transceivers are "moving parts" requiring frequent insertion and removal, while cold plates and other cooling components are fixed within the switch chassis, resulting in floating tolerances between them. Insufficient flexibility in cold plate design can lead to difficulty in inserting/removing transceivers or damage to thermal interface materials during the process.

Current mainstream industry solutions include the following:

Heat Pipe-Assisted Water Cooling: Heat pipes conduct heat from the transceiver to the cold plate. This approach is highly reliable, but its longer thermal path makes it better suited to lower-power scenarios.

Integrated Cold Plate: A floating mechanism at the transceiver interface absorbs tolerances so that the cold plate contacts the heat source directly. The thermal path is shorter and performance is better, although the long-term reliability of the floating mechanism still needs further validation.

Flexible-Hose Independent Cold Plates: Ciena has proposed independent cold plates connected by flexible hoses, whose flexibility absorbs the tolerances while delivering excellent cooling performance. However, multi-branch hoses require clamps, and leakage risk grows with the number of branches.
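Two of these trade-offs can be made concrete with simple models. A series thermal-resistance chain shows why a longer thermal path (an extra heat-pipe stage) raises module temperature, and an independent-failure model shows how leak risk grows with the number of clamped hose joints: for a small per-joint probability p, the chance of at least one leak among n joints is close to n·p. All resistance values, the coolant temperature, and the per-joint probability are hypothetical placeholders, not measured data for any product.

```python
def case_temperature_c(coolant_c: float, power_w: float,
                       resistances_k_per_w: list[float]) -> float:
    """Series thermal path: each stage adds power * R (K/W) to the rise."""
    return coolant_c + power_w * sum(resistances_k_per_w)


def p_any_leak(p_per_joint: float, joints: int) -> float:
    """Chance that at least one of n independent joints leaks."""
    if not 0.0 <= p_per_joint <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    return 1.0 - (1.0 - p_per_joint) ** joints


if __name__ == "__main__":
    COOLANT_C = 35.0  # hypothetical coolant supply temperature
    POWER_W = 18.0    # 800G module power quoted in the introduction
    # Hypothetical stage resistances in K/W
    integrated = [0.3, 0.2]       # TIM -> cold plate
    heat_pipe = [0.3, 0.4, 0.2]   # TIM -> heat pipe -> cold plate
    print("integrated:", case_temperature_c(COOLANT_C, POWER_W, integrated))
    print("heat pipe: ", case_temperature_c(COOLANT_C, POWER_W, heat_pipe))
    for n in (1, 4, 8):
        print(f"{n} joints: P(any leak) = {p_any_leak(0.001, n):.4f}")
```

With these placeholders, the extra 0.4 K/W heat-pipe stage adds 7.2°C at 18W, and eight joints carry almost eight times the single-joint leak probability.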

For high-data-rate transceivers such as C-LIGHT's 800G modules, heat dissipation design within a cold plate liquid cooling system must pay special attention to the height constraint on the cold plate. The push for high equipment density in intelligent computing centers requires cold plate height to stay within 9mm, with reliable designs compressed further to 7mm or less.

4.4 Coolant Types and Optical Transceiver Material Compatibility

The selection of coolant directly impacts the safety and operational costs of the entire liquid cooling system and represents one of the highest value-density segments within the liquid cooling industry chain. Different types of liquid cooling systems impose distinct physicochemical performance requirements on coolants:

Water-Based Coolants: Primarily used in cold plate liquid cooling systems. The market is open and highly competitive, with many participants and lower costs, but the risk posed by water's electrical conductivity must be mitigated through isolation design within the cold plate.

Oil-Based Coolants: Based on organic hydrocarbon compounds, widely applied in single-phase immersion cooling systems. This segment features high technical barriers and is dominated by foreign brands, with domestic companies rapidly catching up.

Fluorinated Fluids: Considered the ideal coolant medium for data centers due to excellent dielectric properties, chemical inertness, and low Global Warming Potential (GWP). They are better suited for high-power applications with stringent cooling requirements, such as two-phase cold plates, immersion cooling, and microchannel implementations. As traditional international giants like 3M strategically exit this space due to environmental factors, Chinese companies possessing technical expertise and supply chain advantages are accelerating their rise, seizing a historic window of opportunity for "domestic substitution."

Material selection for C-LIGHT optical transceivers strictly adheres to liquid cooling environment requirements. Products undergo compatibility testing with various mainstream coolants (including imported and domestic fluorinated fluids, silicone oils, PAO, etc.) to ensure long-term operational stability and reliability within liquid-cooled environments.

5. Application Scenarios for C-LIGHT Optical Transceivers in Liquid-Cooled Data Centers

5.1 Intra-AI Cluster Interconnects (25G/100G)

Within AI computing clusters, high-speed interconnections between GPU servers and switches impose stringent requirements on optical transceiver bandwidth, latency, and cooling performance. C-LIGHT's 25G SFP28 and 100G QSFP28 series products are widely used for uplink connections from GPU servers to Top-of-Rack (ToR) switches. In liquid cooling deployment scenarios, these lower-rate transceivers can be deployed directly within immersion-cooled racks using sealing adaptation solutions, or deployed via air-liquid hybrid schemes on the switch side within cold plate liquid-cooled environments.

5.2 Data Center Spine-Leaf Networks (400G)

In data center Spine-Leaf network architectures, interconnections between Leaf switches and Spine switches typically utilize 400G optical transceivers. C-LIGHT's 400G QSFP-DD/OSFP series products (covering specifications such as SR8, DR4, FR4, LR4) meet various distance requirements for inter-rack, inter-building, and campus-level interconnects. In cold plate liquid-cooled data centers, the typical 12W power consumption of 400G transceivers can be effectively managed by the switch's liquid cooling system, achieving efficient rack-level heat dissipation.

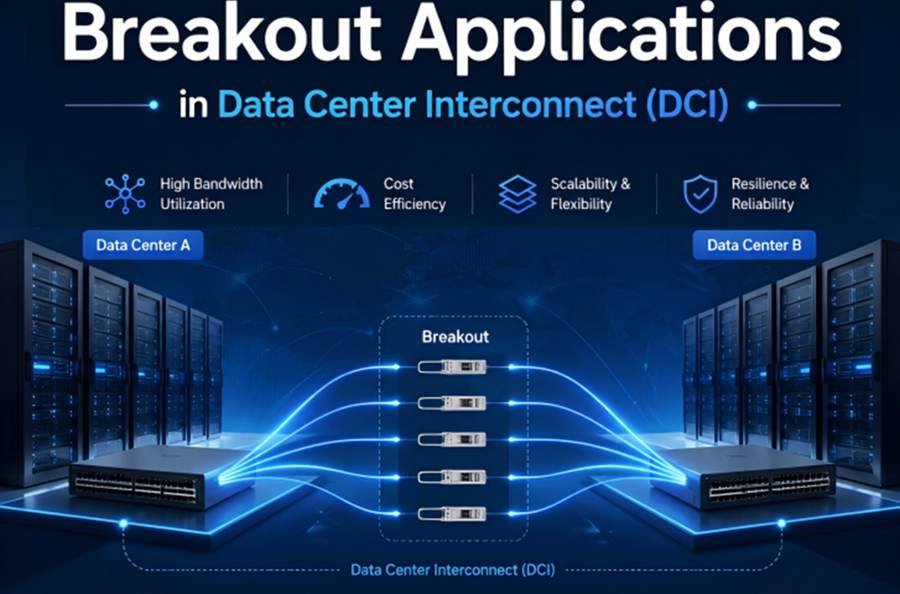

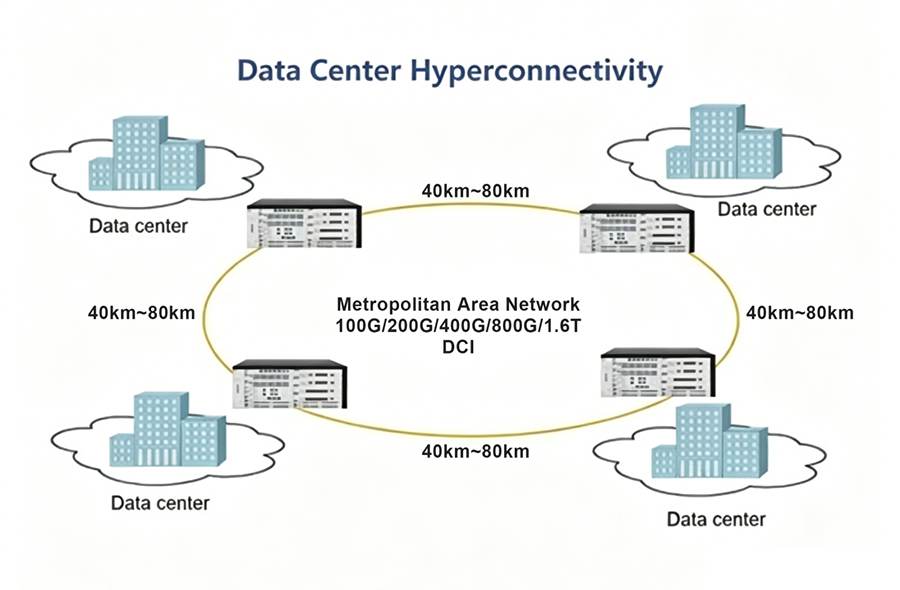

5.3 AI Cluster High-Speed Interconnects and DCI (800G)

In large model training across clusters of thousands of GPUs, inter-GPU communication bandwidth directly affects training efficiency. C-LIGHT's 800G SR8/VR8 modules provide 800G full-duplex communication, sustaining stable, high-bandwidth, low-latency transmission in liquid-cooled environments. For Data Center Interconnect (DCI), C-LIGHT's 400G ZR+ and 800G ZR+ coherent optical transceivers support long-haul transmission from 120km to over 450km. Combining coherent DCO technology with liquid cooling, these products provide a highly reliable optical transmission platform for interconnecting geographically distributed data centers.

6. Conclusion

As the demand for AI computing power continues to surge, data center liquid cooling technology is transitioning from pilot applications to large-scale deployment. Cold plate liquid cooling currently dominates the market, while immersion cooling represents the future direction, together driving innovation in data center cooling solutions. In this context, the ability of optical transceivers—core components of network interconnection—to synergistically adapt to liquid cooling systems has become a critical dimension of product competitiveness.

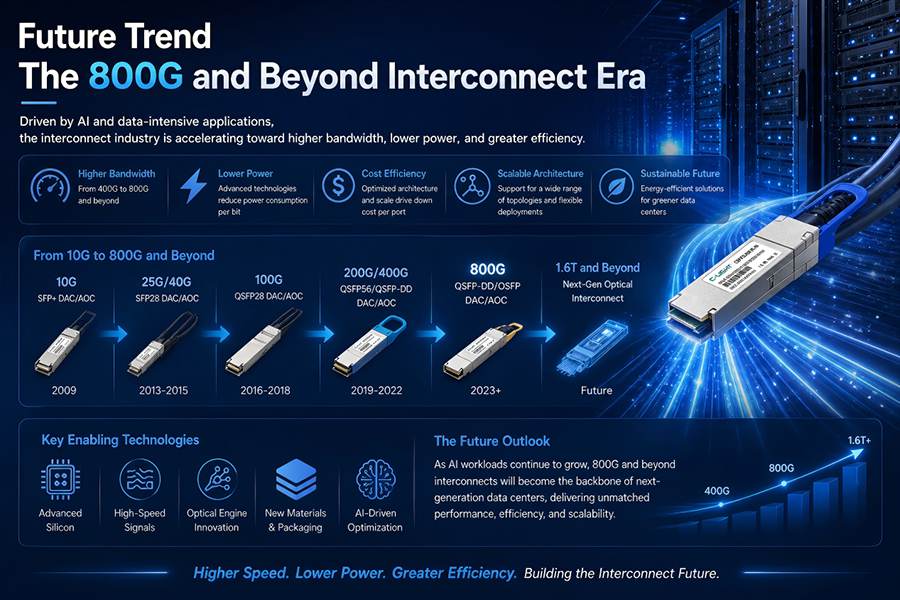

Leveraging 15 years of technical expertise and a comprehensive product line layout, C-LIGHT has established mature product capabilities across its entire 25G/100G/400G/800G optical transceiver portfolio. Addressing the cooling challenges of liquid-cooled data centers, C-LIGHT optical transceivers are continuously optimized in terms of material selection, structural design, and environmental adaptability to ensure stable operation across diverse liquid cooling scenarios, including cold plate and immersion cooling. The company's 100% factory inspection coverage and three-tier quality control system provide a solid guarantee of product reliability. Looking ahead, as technologies evolve toward 800G Ethernet and 1.6T, C-LIGHT will continue to drive R&D innovation to meet the demands of the AI era for high bandwidth, low power consumption, and highly reliable optical interconnects, contributing to the advancement of liquid-cooled data center construction toward higher energy efficiency levels.

TEL:+86 158 1857 3751
