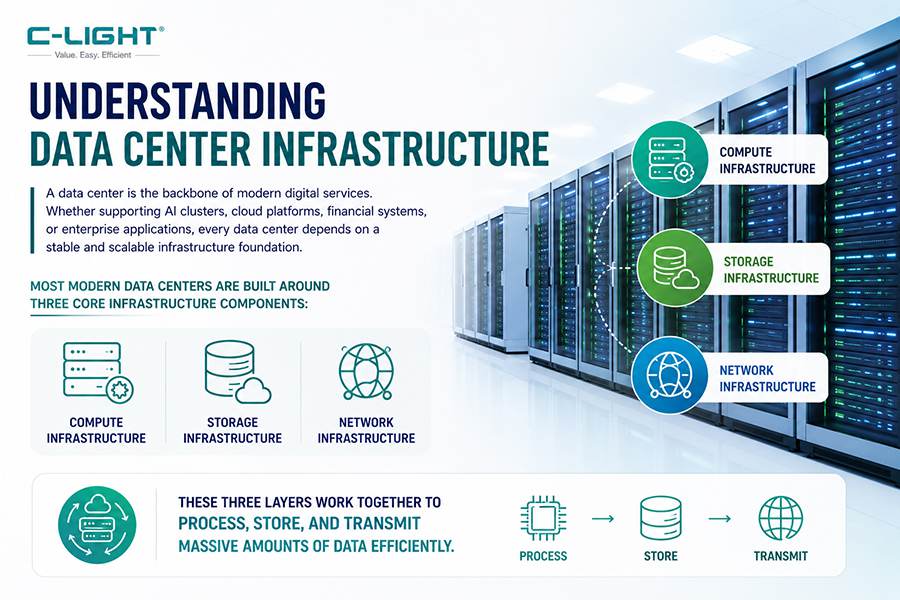

The data center is the physical cornerstone of the modern digital economy. Serving as the underlying carrier for cloud computing, AI training and inference, big data analytics, and a wide array of internet services, a data center is a highly precise computing ecosystem where various components work in tight coordination. Understanding the basic components of a data center is the starting point for grasping how modern information infrastructure operates efficiently, reliably, and at scale.

From a physical architecture perspective, a standard data center primarily consists of the following core components: Servers (Compute Resources), Storage Systems, Networking Equipment, Cabling and Interconnect Facilities, Power Systems, Cooling Systems, and Security and Management Facilities. These components do not operate in isolation; rather, they collaborate as an integrated whole through standardized racks, efficient interconnect architectures, and intelligent software management.

I. Servers: The Computing Engine of the Data Center

Servers are the computational core of the data center, responsible for handling all processing tasks, running applications, and hosting services. Traditionally, servers exist as standalone physical devices, including rack servers, blade servers, and tower servers. The core components of each server include:

- Processors (CPU/GPU/DPU): The Central Processing Unit (CPU) handles general-purpose computing tasks. In recent years, Graphics Processing Units (GPUs) have become the primary accelerators in AI training and High-Performance Computing (HPC) clusters. Data Processing Units (DPUs) offload networking, storage, and security processing from the CPU, a role that has become increasingly important in these clusters.

- Memory (RAM): Provides high-speed temporary data storage for running applications and the operating system.

- Local Storage (HDD/SSD): Provides operating system boot capability and local data caching.

- Power and Cooling Modules: Ensure the stable operation of the server.

In modern hyperscale data centers, servers are typically deployed at high density in standardized rack units. A single rack can house dozens of servers, connecting to the data center network via Top-of-Rack (ToR) switches.
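How many servers count as "dozens" depends mostly on rack height and server form factor. The minimal sketch below counts how many servers fit by space alone. It assumes a hypothetical 42U rack with 2U reserved for the ToR switch and cable management; these are illustrative figures, not a standard, and the function name `servers_per_rack` is our own.

```python
def servers_per_rack(rack_units: int, server_height_u: int, reserved_units: int) -> int:
    """Return how many servers of one height fit in a rack, by space alone.

    reserved_units is the space set aside for the ToR switch, patch panels,
    and cable management (an illustrative assumption, not a standard value).
    """
    if rack_units <= 0 or server_height_u <= 0:
        raise ValueError("rack and server heights must be positive")
    if not 0 <= reserved_units <= rack_units:
        raise ValueError("reserved_units must be between 0 and rack_units")
    usable_units = rack_units - reserved_units
    # Integer division: a partial server slot cannot hold a server.
    return usable_units // server_height_u


if __name__ == "__main__":
    # Hypothetical 42U rack with 2U reserved for a ToR switch and cabling.
    for height in (1, 2):
        print(f"{height}U servers per rack: {servers_per_rack(42, height, 2)}")
```

Under these assumptions, a rack holds 40 1U servers or 20 2U servers. In practice, the rack's power and cooling budget often caps density before physical space does, especially for GPU servers.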

II. Storage Systems: The Persistent Warehouse of Data

If servers are the "computing center," then storage systems are the "data bank." Data centers require large-scale, highly reliable storage solutions to preserve vast amounts of business data, user information, and log files. Storage systems are primarily categorized as follows:

- Direct-Attached Storage (DAS): Storage devices connected directly to a single server, suitable for small-scale deployments.

- Network-Attached Storage (NAS): Provides file-level data access over standard Ethernet, allowing multiple servers to share storage resources.

- Storage Area Network (SAN): Connects storage devices to servers via dedicated high-speed Fibre Channel or Ethernet, providing block-level data access. This is ideal for mission-critical business systems with stringent performance and reliability requirements.

With the proliferation of Solid-State Drives (SSDs) and the maturation of NVMe over Fabrics technology, storage latency in data centers has evolved from milliseconds to microseconds, providing critical support for scenarios such as real-time analytics and AI inference.
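The shift from milliseconds to microseconds matters because throughput is inversely proportional to latency when the number of outstanding requests is fixed (Little's Law). The sketch below is a simplified steady-state model. Its latencies are illustrative values, not measured device figures, and `iops_at_latency` is a hypothetical helper.

```python
def iops_at_latency(latency_s: float, queue_depth: int = 1) -> float:
    """Estimate sustained I/O operations per second via Little's Law.

    Little's Law: throughput = requests in flight / time per request.
    """
    if latency_s <= 0:
        raise ValueError("latency must be positive")
    if queue_depth < 1:
        raise ValueError("queue_depth must be at least 1")
    return queue_depth / latency_s


if __name__ == "__main__":
    # Illustrative latencies, not measurements of any particular device.
    for label, latency in [("5 ms (disk-class)", 5e-3), ("100 us (flash-class)", 100e-6)]:
        print(f"{label}: {iops_at_latency(latency):,.0f} IOPS at queue depth 1")
```

At queue depth 1, a 5 ms latency yields about 200 IOPS, while 100 µs yields about 10,000 IOPS. That is a 50-fold gain from the latency reduction alone.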

III. Networking Equipment: The Highway for Data Flow

Network infrastructure determines the efficiency and latency of data movement between servers, between servers and storage, and between the data center and the outside world. Data center networks typically employ a hierarchical architecture, primarily including:

- Core Layer: The central nervous system of the data center network, providing high-speed data switching and external connectivity, typically utilizing high-throughput modular switches.

- Aggregation Layer: Connects multiple access layer switches, performing traffic aggregation and policy control.

- Access Layer: Also known as Top-of-Rack (ToR) switches, these connect directly to servers, providing first-hop network access.

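- Leaf-Spine Architecture: Instead of the three-tier hierarchy above, modern hyperscale data centers widely adopt this flat, two-tier architecture, in which every Leaf switch connects to every Spine switch. As a result, servers on any two Leaf switches are the same number of hops apart. When Spine uplink capacity matches server-facing capacity, the fabric is also non-blocking, which suits the massive East-West traffic among thousands of GPUs in AI clusters.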
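The Leaf-Spine wiring rule (every Leaf connected to every Spine) can be expressed in a few lines. This sketch builds the link list and enumerates the paths between two Leaf switches, showing that each Spine contributes exactly one two-hop path. The switch names, such as `leaf0` and `spine0`, are hypothetical.

```python
from itertools import product


def build_leaf_spine(num_leaves: int, num_spines: int) -> list[tuple[str, str]]:
    """Return every leaf-to-spine link in a two-tier full-mesh fabric."""
    leaves = [f"leaf{i}" for i in range(num_leaves)]
    spines = [f"spine{j}" for j in range(num_spines)]
    # Each leaf gets one uplink to each spine. Leaves never link to each
    # other, and spines never link to each other.
    return list(product(leaves, spines))


def leaf_to_leaf_paths(links: list[tuple[str, str]], src: str, dst: str) -> list[list[str]]:
    """List every leaf -> spine -> leaf path between two different leaves."""
    if src == dst:
        raise ValueError("source and destination leaves must differ")
    src_spines = {spine for leaf, spine in links if leaf == src}
    dst_spines = {spine for leaf, spine in links if leaf == dst}
    # Each spine shared by both leaves provides one equal-length (two-hop) path.
    return [[src, spine, dst] for spine in sorted(src_spines & dst_spines)]


if __name__ == "__main__":
    links = build_leaf_spine(num_leaves=8, num_spines=4)
    print(f"{len(links)} leaf-spine links")
    for path in leaf_to_leaf_paths(links, "leaf0", "leaf5"):
        print(" -> ".join(path))
```

With 8 Leaf and 4 Spine switches, the fabric has 32 links and 4 equal-length paths between any two Leaf switches. Equal-Cost Multi-Path (ECMP) routing can spread flows across those paths, and adding a Spine adds one more path between every Leaf pair.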

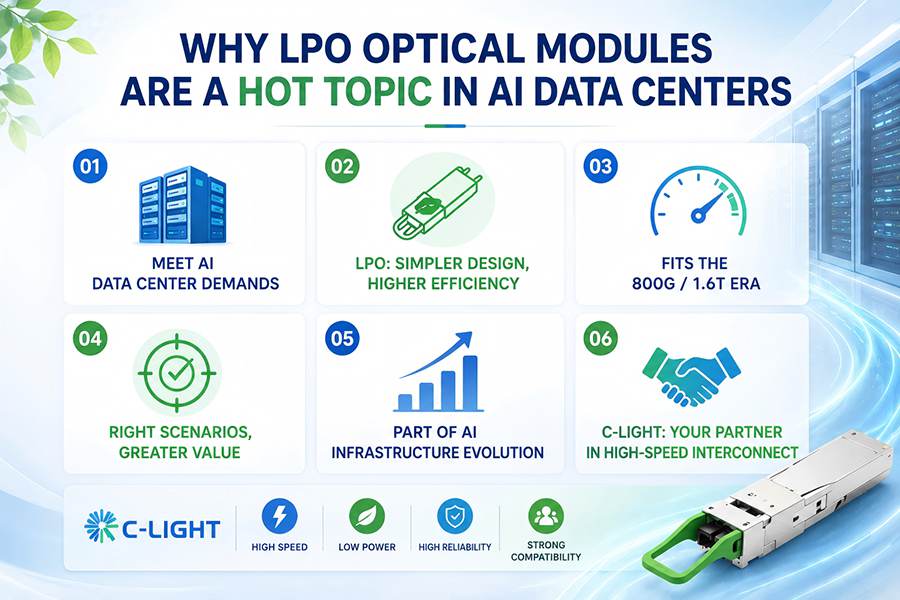

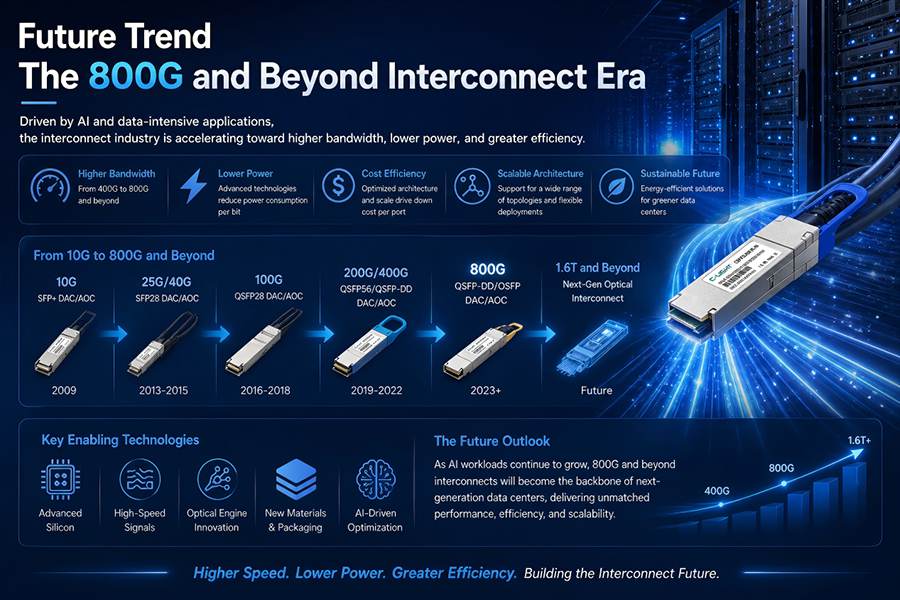

In the physical interconnection of network devices, optical transceivers are the critical components that convert electrical signals to optical signals and back again. Their speed and performance directly determine the total throughput capacity of the data center network. With the explosive growth in bandwidth demand driven by AI data centers, 800G optical transceivers have become the standard for next-generation large-scale clusters. Industry data indicates that global shipments of 800G transceivers are expected to double in 2025, with total shipments of high-speed data communication transceivers at 400G and above reaching approximately 42 million units.

IV. Cabling and Interconnects: The Physical Link for Data Flow

The cabling system provides the physical pathways for data transmission between devices within the data center, covering everything from short-reach connections inside cabinets to medium and long-reach interconnects across racks. Key cabling and interconnect solutions in modern data centers include:

- Fiber Optic Cabling: Utilizes single-mode or multimode fiber for long-distance, high-bandwidth data transmission. In long-reach and ultra-high-bandwidth scenarios, fiber is the only viable option.

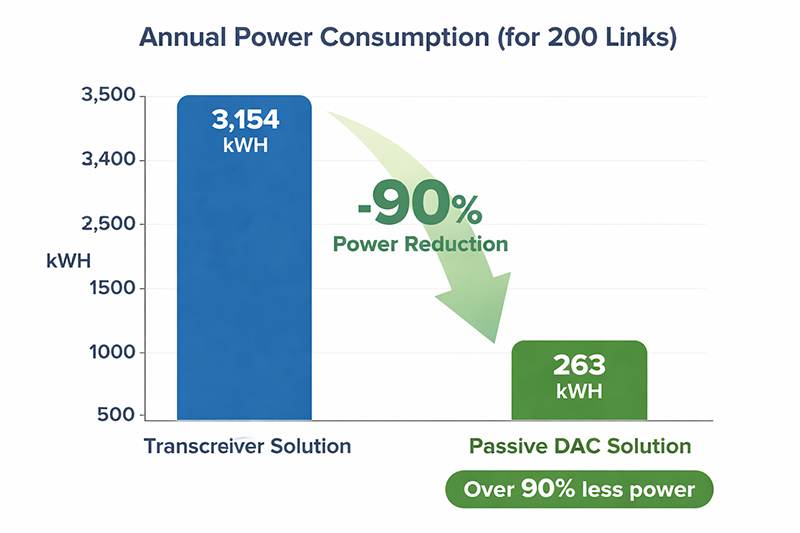

- Direct Attach Copper (DAC): Passive copper cables with fixed connectors on both ends. They offer extremely low cost, near-zero power consumption, and minimal latency, making them ideal for short-reach interconnects within a rack or between adjacent racks. Typical reach is up to about 3 to 7 meters, and the maximum reach shrinks as data rates rise.

- Active Optical Cables (AOC): Optical transceivers and fiber permanently integrated into a single assembly. AOCs combine the long-distance transmission capability of fiber (up to 100 meters or more) with the plug-and-play convenience of a cable, making them an ideal choice for Middle-of-Row/End-of-Row interconnects.
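The reach guidelines above suggest a simple rule for choosing a link type by distance. The sketch below encodes that rule. By default, it uses the conservative 3 m end of the DAC range and a 100 m AOC limit. `suggest_interconnect` and its thresholds are illustrative, and real limits depend on data rate and vendor specifications.

```python
def suggest_interconnect(distance_m: float,
                         dac_max_m: float = 3.0,
                         aoc_max_m: float = 100.0) -> str:
    """Pick the lowest-cost link type whose reach covers distance_m.

    Thresholds are simplified assumptions based on typical reach figures.
    Real limits depend on data rate, cable gauge, and vendor specifications.
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    if distance_m <= dac_max_m:
        return "DAC"  # passive copper: lowest cost, near-zero power
    if distance_m <= aoc_max_m:
        return "AOC"  # integrated optics and fiber, plug-and-play
    return "optical transceivers + structured fiber"  # long reach


if __name__ == "__main__":
    for d in (2, 5, 30, 500):
        print(f"{d} m -> {suggest_interconnect(d)}")
```

For example, a 5 m run maps to AOC with the default thresholds but to DAC if `dac_max_m` is raised to 7. AOCs are also sold in short lengths (C-LIGHT's range starts at 0.5 m), so the rule returns the cheapest option that covers the distance, not the only option that works.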

In the field of cabling and interconnects, C-LIGHT offers a product portfolio covering data rates from 10G to 800G. Drawing on 15 years of experience manufacturing fiber optic network products, C-LIGHT offers optical transceivers, AOCs, DACs, and DWDM/CWDM active and passive products at speeds including 800G, 2x400G, 400G, 200G, 100G, 50G, 40G, 25G, 10G, 1.25G, and 100M.

C-LIGHT 800G AOC Active Optical Cables are designed for hyperscale data centers and HPC applications. Utilizing the QSFP-DD form factor, they achieve ultra-high-speed data transmission of 800 Gbps over a 2-meter distance, require no external power supply, and offer true plug-and-play functionality. C-LIGHT's AOC products employ 850nm VCSEL lasers and PIN photodetectors, support multimode fiber transmission, and cover distances ranging from 0.5 to 30 meters, meeting the stringent demands for high-density, low-power interconnectivity in modern data center architectures.

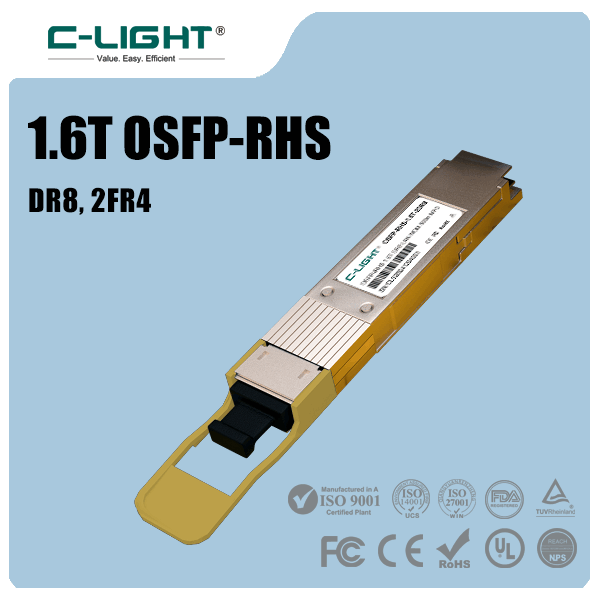

V. Optical Transceivers: The Decisive Component for Network Speed

Optical transceivers are the core devices enabling high-speed fiber optic communication within the data center. As the scale of AI computing clusters expands from thousands to tens of thousands or even hundreds of thousands of accelerators, optical interconnects are becoming a critical bottleneck affecting total system bandwidth and energy consumption. The current distribution of optical transceiver data rates in data centers is as follows:

- 100G and Below: Widely deployed in traditional enterprise data centers; mature technology.

- 400G: Currently the mainstream product with the broadest shipment volume and application coverage; the preferred solution for connecting GPU servers to Leaf switches. Deployment of 400G and higher-speed transceivers grew by over 250% year-over-year in 2024.

- 800G: Entering a phase of high-volume ramp-up in 2025, with shipments expected to grow over 100% year-over-year. Used for connecting Spine and Leaf switches, as well as high-speed interconnects within GPU clusters.

- 1.6T: Next-generation technology standard expected to enter a rapid growth trajectory by 2026, with shipments potentially exceeding 5 million units that year.
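Growth percentages like these are easy to misread. Growth of X% year-over-year means the new volume is (1 + X/100) times the old one. The minimal sketch below makes that conversion explicit and compounds it over several years. The function names are hypothetical, and the base volume is normalized to 1.0.

```python
def growth_multiplier(yoy_growth_percent: float) -> float:
    """Convert year-over-year growth (in percent) to a volume multiplier."""
    if yoy_growth_percent < -100:
        raise ValueError("volume cannot shrink by more than 100%")
    return 1 + yoy_growth_percent / 100


def project_volume(base_volume: float, yearly_growth_percent: list[float]) -> float:
    """Compound a base volume through successive years of growth."""
    volume = base_volume
    for pct in yearly_growth_percent:
        volume *= growth_multiplier(pct)
    return volume


if __name__ == "__main__":
    print(growth_multiplier(250))  # 250% growth
    print(growth_multiplier(100))  # 100% growth, i.e. doubling
    # Hypothetical normalized base of 1.0 units, then two years of growth.
    print(project_volume(1.0, [250, 100]))
```

So "over 250% growth" means more than 3.5 times the previous year's volume, not 2.5 times. A year of 250% growth followed by a year of 100% growth compounds to 7 times the starting volume.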

C-LIGHT possesses deep technological expertise in data center optical transceivers, offering a full range of solutions from 25G to 800G. The company has developed and produced over 1,000 optical module product variants, all certified to multiple international and domestic standards.

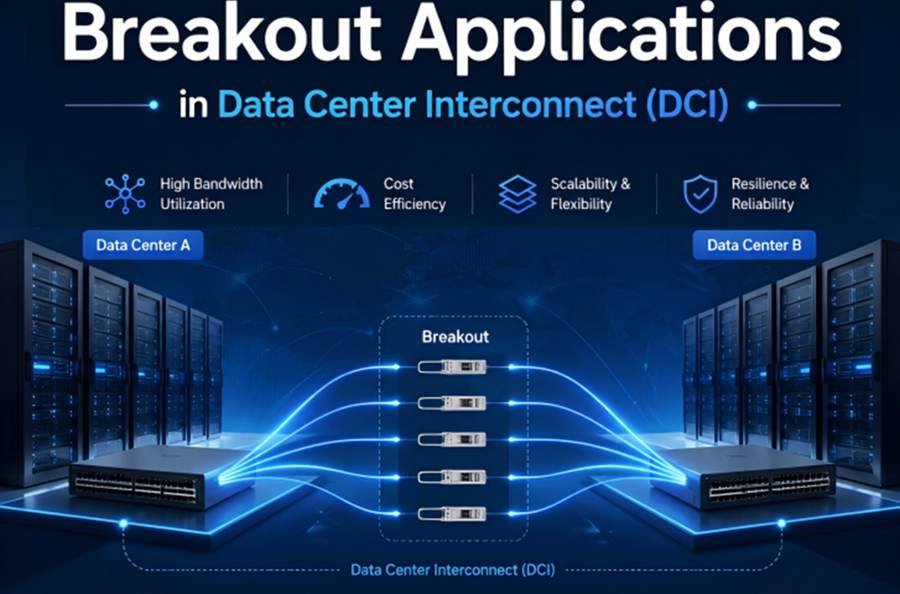

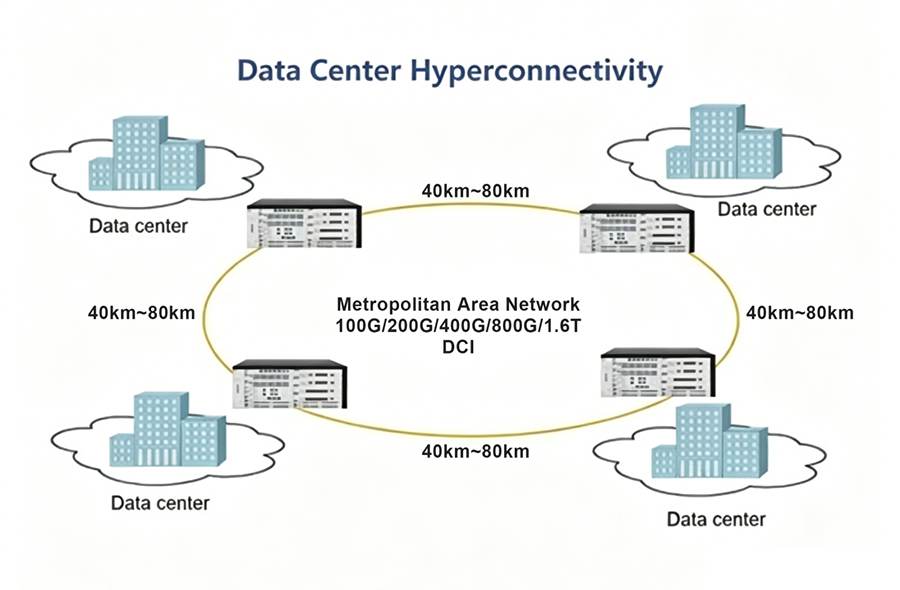

In high-speed data center scenarios, C-LIGHT's 800G/400G series optical transceivers support data center backbone networks built on 25.6 Tbps switches, efficiently handling East-West traffic forwarding among thousands of GPUs in large-scale AI training clusters. C-LIGHT has also introduced 25G/100G/400G/800G series optical transceiver solutions designed for liquid-cooled data centers, which are adapted to the operating temperatures and heat dissipation conditions of high-density liquid-cooled server racks. In addition, C-LIGHT's 400G ZR QSFP-DD Coherent Optical Transceivers support transmission distances of up to 120 km, providing a low-cost, high-density DWDM transmission solution for Data Center Interconnect (DCI) scenarios.
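A switch's headline bandwidth is the sum of its port line rates, so a 25.6 Tbps figure translates directly into a port count at a given transceiver speed. The sketch below works through that arithmetic. The helper names are hypothetical, and the sketch assumes all ports run at the same speed, ignoring breakout configurations.

```python
def switch_capacity_tbps(port_count: int, port_speed_gbps: int) -> float:
    """Sum of port line rates for a switch, in Tbps."""
    if port_count <= 0 or port_speed_gbps <= 0:
        raise ValueError("port count and speed must be positive")
    return port_count * port_speed_gbps / 1000


def ports_needed(capacity_gbps: int, port_speed_gbps: int) -> int:
    """Ports of one speed needed to reach a target capacity (rounded up)."""
    if capacity_gbps <= 0 or port_speed_gbps <= 0:
        raise ValueError("capacity and port speed must be positive")
    # Integer ceiling division avoids floating-point rounding surprises.
    return (capacity_gbps + port_speed_gbps - 1) // port_speed_gbps


if __name__ == "__main__":
    for speed in (400, 800):
        ports = ports_needed(25_600, speed)
        print(f"{ports} x {speed}G = {switch_capacity_tbps(ports, speed)} Tbps")
```

Both 32 ports of 800G and 64 ports of 400G add up to 25.6 Tbps. Doubling the transceiver speed therefore delivers the same switch capacity with half as many ports and cables.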

VI. Power and Cooling Systems: The Guarantee of Stable Operation

Power and cooling are fundamental to the stable operation of a data center and represent some of the largest components of Operating Expenses (OPEX).

- Power Systems: Include utility power feeds, Uninterruptible Power Supplies (UPS), backup generators, and Power Distribution Units (PDUs). Modern data centers are typically designed with N+1 redundancy or 2N redundancy. N+1 provides one spare component beyond what the load requires, which keeps operations running through the failure of any single component. 2N provides two fully independent power paths, which also survives the loss of an entire power path. PDUs then distribute electrical power to each individual server and network device.

- Cooling Systems: With the dramatic rise in power consumption of AI chips, traditional air cooling struggles to meet the heat dissipation requirements of high-density racks. Liquid cooling technologies, including cold-plate and immersion solutions, are gradually becoming mainstream. They can reduce Power Usage Effectiveness (PUE), the ratio of total facility power to IT equipment power, from above 1.4 to below 1.1.
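PUE is total facility power divided by IT equipment power, so a PUE of 1.0 would mean every watt goes to IT equipment. The sketch below does three things: it computes PUE, calculates the implied non-IT overhead, and counts power units under the N+1 and 2N redundancy schemes mentioned above, with plain N as a baseline. The 1 MW IT load and 250 kW unit size are hypothetical, and the unit-counting rule simplifies real electrical design.

```python
import math


def pue(total_facility_kw: float, it_load_kw: float) -> float:
    """Power Usage Effectiveness = total facility power / IT equipment power."""
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    if total_facility_kw < it_load_kw:
        raise ValueError("facility power cannot be below IT power")
    return total_facility_kw / it_load_kw


def overhead_kw(pue_value: float, it_load_kw: float) -> float:
    """Power spent on cooling, power conversion, lighting, and so on."""
    return (pue_value - 1) * it_load_kw


def units_required(load_kw: float, unit_capacity_kw: float, scheme: str) -> int:
    """Count UPS or generator units needed under a redundancy scheme."""
    if load_kw <= 0 or unit_capacity_kw <= 0:
        raise ValueError("load and unit capacity must be positive")
    n = math.ceil(load_kw / unit_capacity_kw)  # units that just carry the load
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1  # one spare unit
    if scheme == "2N":
        return 2 * n  # a complete duplicate set for a second power path
    raise ValueError(f"unknown redundancy scheme: {scheme}")


if __name__ == "__main__":
    it_load = 1000.0  # hypothetical 1 MW IT load
    for facility in (1450.0, 1080.0):
        p = pue(facility, it_load)
        print(f"PUE {p:.2f}, overhead {overhead_kw(p, it_load):.0f} kW")
    for scheme in ("N", "N+1", "2N"):
        print(scheme, units_required(it_load, 250.0, scheme))
```

At a 1,000 kW IT load, moving from a PUE of 1.4 to 1.1 cuts non-IT overhead from 400 kW to 100 kW. With 250 kW units, the same load needs 4 units at N, 5 at N+1, and 8 at 2N.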

VII. Security and Management Systems: The Brain for Intelligent Operations

Beyond physical hardware components, data centers require a comprehensive suite of software management systems to ensure security, monitor status, and enable automated operations.

- Physical Security: Includes access control systems, biometric authentication, video surveillance, and intrusion detection to prevent unauthorized physical access.

- Network Security: Includes firewalls, Intrusion Prevention Systems (IPS), and Distributed Denial-of-Service (DDoS) mitigation appliances to protect the data center from cyberattacks.

- Management Software: Includes Data Center Infrastructure Management (DCIM) platforms and Software-Defined Networking (SDN) controllers. DCIM systems monitor rack temperature, power loads, and equipment status in real-time, while SDN technology decouples the network control plane from the data forwarding plane, making network configuration and optimization highly automated and programmable.
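A toy model can illustrate the separation of the control plane from the forwarding plane. In this model, switches only look up rules in a table, while a central controller decides what those rules are. The `Switch` and `Controller` classes below are hypothetical and do not mirror any real SDN controller's API. Dropping unmatched traffic is a simplifying assumption.

```python
from dataclasses import dataclass, field


@dataclass
class Switch:
    """Data plane: forwards purely by looking up its installed flow table."""
    name: str
    flow_table: dict[str, str] = field(default_factory=dict)  # destination -> output port

    def forward(self, destination: str) -> str:
        # The switch makes no routing decisions; unmatched traffic is dropped.
        # (A simplification: real SDN switches can send misses to the controller.)
        return self.flow_table.get(destination, "drop")


class Controller:
    """Control plane: keeps a global view and programs every registered switch."""

    def __init__(self) -> None:
        self.switches: dict[str, Switch] = {}

    def register(self, switch: Switch) -> None:
        self.switches[switch.name] = switch

    def install_rule(self, switch_name: str, destination: str, out_port: str) -> None:
        # Forwarding policy is decided here and pushed down to the switch.
        self.switches[switch_name].flow_table[destination] = out_port


if __name__ == "__main__":
    controller = Controller()
    leaf = Switch("leaf0")
    controller.register(leaf)
    controller.install_rule("leaf0", "server-b", "port49")
    print(leaf.forward("server-b"))
    print(leaf.forward("server-z"))
```

Because the switch's forwarding logic never changes, reconfiguring the network comes down to controller calls such as `install_rule`. That is what makes SDN-managed networks straightforward to automate.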

An Intelligent Computing Foundation Driven by Component Synergy

The basic components of a data center—from servers and storage to networking equipment, cabling, optical transceivers, power, cooling, and management systems—form a highly integrated and interdependent ecosystem. Technological evolution in any single component drives changes in the overall architecture: increased server compute density drives the adoption of liquid cooling; East-West traffic in AI clusters accelerates the deployment of Leaf-Spine architecture and 800G optical transceivers; and efficient optical interconnect components, such as C-LIGHT's 800G/400G optical transceivers and AOC/DAC cables, are becoming critical determinants of total data center bandwidth and energy efficiency.

As the parameter scale of large AI models continues to grow and real-time inference applications proliferate, the demand for optical interconnect bandwidth in data centers is rising rapidly. According to forecasts, the market for high-speed data communication optical transceivers at 400G and above will approach $30 billion by 2029, with cost per bit of bandwidth continuing to decline. In this context, building efficient, reliable, and scalable data center infrastructure relies on collaborative, full-stack innovation—from chips to servers, from switches to optical modules, and from cabling to cooling systems.

TEL:+86 158 1857 3751
