High-speed cables are the "neural network" of NVIDIA AI clusters, directly impacting inter-GPU communication efficiency and cluster scale.

Application Relationship and Architecture Evolution

Traditional DGX/HGX Systems: Rely primarily on DAC for GPU-to-switch (NVSwitch) interconnect within a chassis or between adjacent racks over very short distances, targeting the lowest latency and cost.

GB200 NVL72 Superchip System: Marks NVIDIA's deeper commitment to copper connections. Inside the NVL72 rack, 72 GPUs are interconnected over a rack-wide backplane of high-speed copper cables (currently mainly DAC), so that the rack behaves as one massive logical GPU. This highlights the cost and power advantages of copper for ultra-short-reach, extreme-bandwidth links, advantages that other media find hard to match.

Large Cloud Service Provider (CSP) Scale-Up Clusters: Examples such as Amazon's Trainium2 Trn2-Ultra64 cluster widely adopt AEC for inter-rack connections. This reflects another industry trend: to optimize Total Cost of Ownership (TCO) and supply chain elasticity, CSPs increasingly prefer a disaggregated model, pairing compute silicon (GPUs purchased from NVIDIA or their own custom ASICs) with connection components such as AEC sourced from other suppliers (e.g., Credo, Amphenol).

Supply Chain and Main Models

Main Models in NVIDIA Solutions: NVIDIA's official solutions often specify the use of passive DAC (e.g., specific cables for NVLink connections) and some ACC. The copper cable connectors used in its GB200 system mainly come from suppliers like Amphenol and Molex.

Leaders and Models in AEC: Credo is a key promoter and standards contributor for AEC technology. Its HiWire series AEC products are industry benchmarks, supporting 400G/800G and even 1.6T rates. Another chip giant, Marvell, also provides key Retimer chip solutions.

Brief Analysis:

NVIDIA leads a "tightly integrated" path (GPU + NVLink + dedicated copper cables) pursuing ultimate performance. CSPs are driving a "disaggregated" path (custom/3rd-party ASIC + AEC) pursuing cost, power efficiency, and supply chain security. These two paths develop in parallel, jointly driving innovation and market expansion for high-speed interconnect technologies like AEC. According to industry forecasts, the global AEC market is expected to grow at a CAGR of approximately 45% from 2023 to 2028, becoming the fastest-growing segment in the data center interconnect market.
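To put the quoted growth rate in perspective, the short sketch below compounds the approximately 45% CAGR over the five years from 2023 to 2028. The function name `growth_multiple` is a hypothetical helper introduced for illustration, and the result is a relative multiple only, since the text gives no absolute market size.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Return the factor by which a quantity grows at a constant annual rate."""
    return (1.0 + cagr) ** years


# Figures quoted in the text: ~45% CAGR from 2023 to 2028 (five compounding periods).
multiple = growth_multiple(0.45, 2028 - 2023)
print(f"Implied growth over the period: about {multiple:.1f}x")
```

Under these assumptions, a market growing at that rate would be a little more than six times its 2023 size by 2028.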

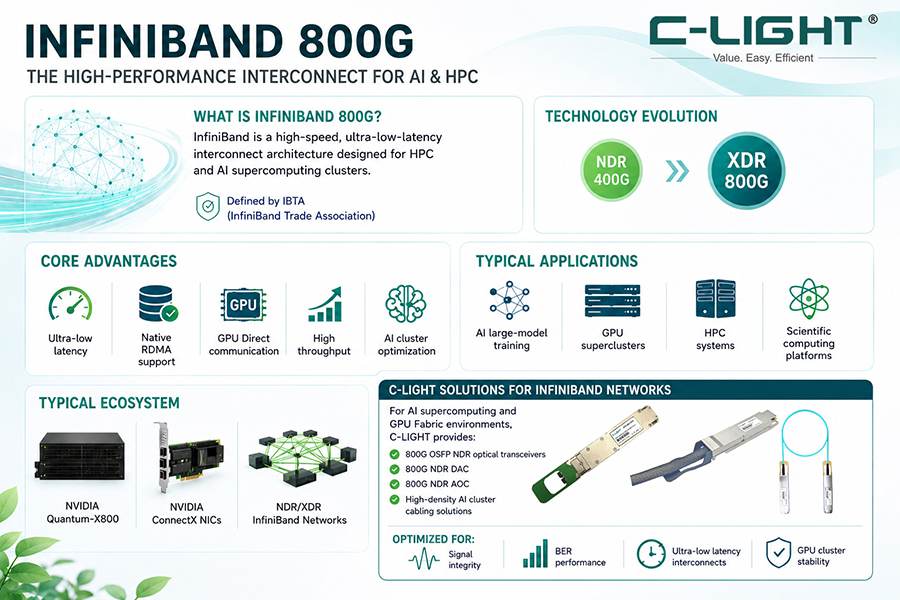

NVIDIA's AI supercomputers impose stringent requirements on interconnect technology, and its product roadmap directly influences the development direction of high-speed cable technology.

In NVIDIA's latest GB200 NVL72 rack architecture, copper cables play a key role. This architecture interconnects 72 Blackwell GPUs using NVLink, employing over 5,000 NVLink copper cables with a total length exceeding 2 miles. These cables are primarily used for high-speed interconnects between GPUs within the rack.
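As a rough sanity check on these figures, the sketch below divides the quoted total length by the quoted cable count. Both numbers are stated as "more than", so the result is only an order-of-magnitude estimate, and the helper name `average_cable_length_m` is an illustrative assumption rather than anything from NVIDIA's documentation.

```python
METERS_PER_MILE = 1609.344  # definition of the international mile


def average_cable_length_m(total_miles: float, cable_count: int) -> float:
    """Average length per cable in meters, given a total run in miles."""
    return total_miles * METERS_PER_MILE / cable_count


# Figures quoted in the text: roughly 2 miles of copper across roughly 5,000 cables.
avg = average_cable_length_m(2.0, 5000)
print(f"Average NVLink cable length: roughly {avg:.2f} m")
```

An average well under one meter is consistent with the point that these links stay inside a single rack, where passive copper is practical.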

NVIDIA offers a comprehensive high-speed cable product line, including Mellanox LinkX InfiniBand DAC copper cables, described as the lowest-cost way to create high-speed, low-latency links in InfiniBand switch networks and NVIDIA GPU-accelerated AI systems.

Regarding the technology roadmap, NVIDIA has expanded from a primary focus on DAC and ACC to also developing 1.6T AEC solutions. Compared with DAC, an AEC adds Retimer chips at both ends of the cable; the copper cable itself remains similar, but transmission distance and signal quality improve significantly.

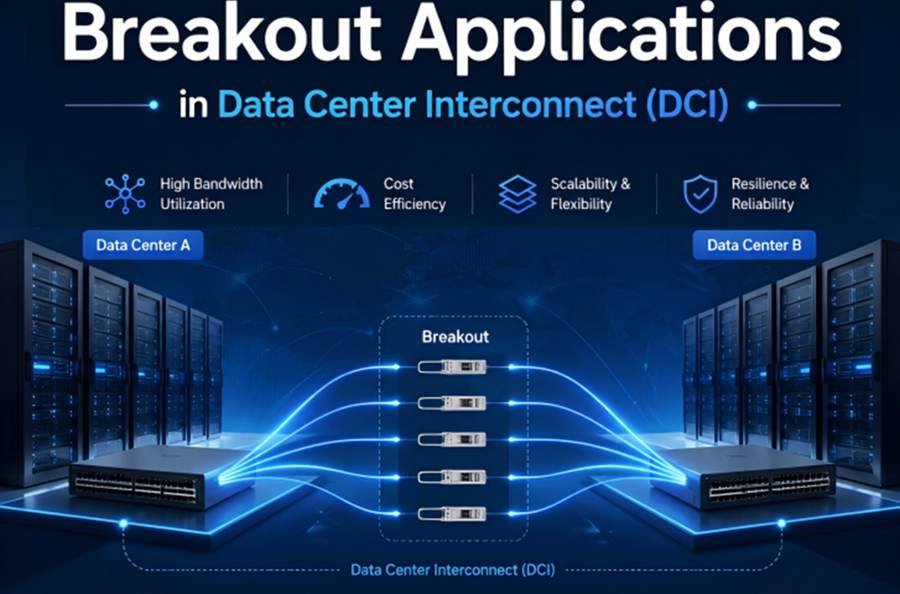

Different interconnect solutions have distinct application scenarios in NVIDIA architectures: DAC, ACC, and AEC are mainly used for intra-rack GPU-to-GPU and GPU-to-switch connections, while AOC and traditional optical modules are more often used for inter-rack connections.
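The division of roles described here can be written down as a simple lookup. This is a minimal sketch that encodes only the mapping stated in the paragraph above; the names `Scope`, `CANDIDATES`, and `candidate_interconnects` are hypothetical, and a real design would also weigh reach, power, and cost, since the text says "mainly" and "more often" rather than "always".

```python
from enum import Enum


class Scope(Enum):
    INTRA_RACK = "intra-rack"
    INTER_RACK = "inter-rack"


# Mapping taken from the text; it describes typical usage, not a hard rule.
CANDIDATES = {
    Scope.INTRA_RACK: ("DAC", "ACC", "AEC"),
    Scope.INTER_RACK: ("AOC", "optical module"),
}


def candidate_interconnects(scope: Scope) -> tuple:
    """Return the interconnect types typically considered for a link scope."""
    return CANDIDATES[scope]


for scope in Scope:
    print(f"{scope.value}: {', '.join(candidate_interconnects(scope))}")
```

Keeping the mapping in a table rather than in branching logic makes it easy to adjust as deployments shift, for example where AEC is also used between racks.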

As AI clusters scale up, AEC offers cabling advantages over traditional DAC in large-scale networks because of its smaller outer diameter and lower space occupancy.

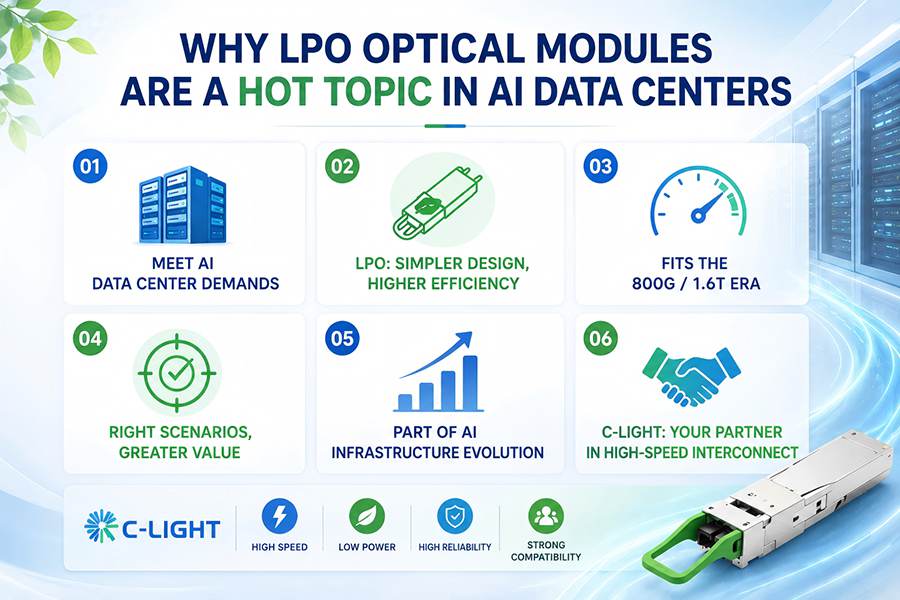

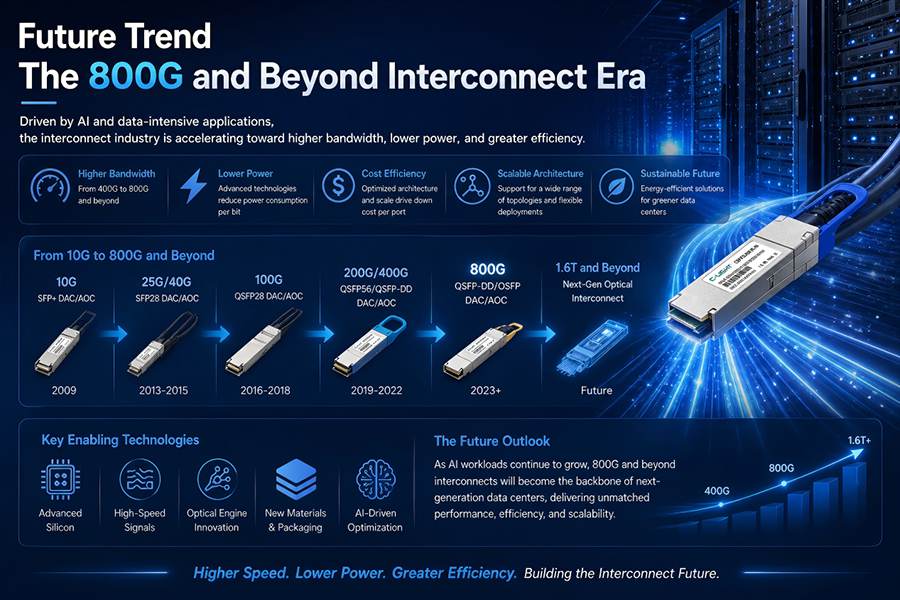

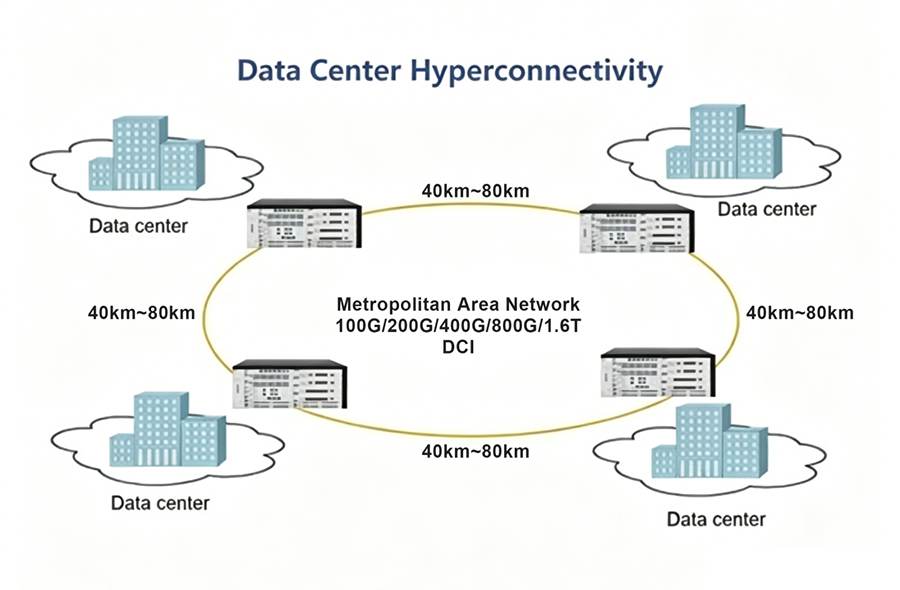

AOC, DAC, AEC, and ACC each occupy a distinct, well-defined niche, and together they form the high-speed data transmission network, from within a chassis to across the data center and from electrical to optical. There is no absolute "best" choice, only the "most suitable" one. In today's white-hot AI computing race, the evolution of interconnect technology has become as crucial as improvements in GPU computational performance. As the 1.6T and 3.2T era arrives, higher-speed AOC based on Silicon Photonics and more highly integrated AEC are expected to become mainstream. The hybrid use of copper and optical technologies will continue to give architects of computing clusters optimized solutions within the "impossible triangle" of performance, cost, and power consumption.

TEL:+86 158 1857 3751

