The introduction of XPO represents a major leap forward in the form factor of pluggable optical modules. With 4× the front‑panel density of OSFP, integrated liquid‑cooling thermal management, high reliability through hardware simplification, and flexible multi‑interface options, XPO systematically addresses the extreme demands that AI data centers place on optical interconnects.

I. Introduction

In March 2026, Arista, a leading networking equipment provider, together with over 45 industry partners, officially released the white paper on eXtra-dense Pluggable Optics (XPO), proposing a new standard for pluggable optical modules tailored for next-generation AI data centers.

II. Five Key Challenges for Optical Interconnects in AI Data Centers

According to the white paper, AI data centers present five core requirements for optical interconnects:

- Ultimate Bandwidth. Distributed training of large-scale AI models requires building non-blocking, high-radix switching fabrics that support simultaneous communication among tens of thousands of accelerators, far exceeding the bandwidth demands of traditional cloud data centers.

- High Reliability. In a large-scale AI fabric with over 50,000 optical links, component failures are statistically inevitable. A single module failure can interrupt a multimillion-dollar training job, resulting in wasted compute power and scheduling disruptions.

- Liquid Cooling Compatibility. The heat load generated by the compute density of modern AI accelerators has surpassed the capabilities of air cooling. Hyperscale AI data centers are making liquid cooling a mandatory infrastructure requirement. Any optical interconnect solution that cannot efficiently integrate with a liquid-cooled environment is unsuitable for next-generation deployment.

- Power Efficiency. High-density racks operate within limited power budgets. Every watt consumed by networking means one less watt for computing. Therefore, transmission power per bit must be significantly reduced.

- Maximum Density. Current OSFP-based systems fit only 32 modules per 1U of rack space. Insufficient density forces network architects to deploy larger, multi-tier topologies, leading to higher latency, cost, and cabling complexity.

Faced with these five challenges, the widely adopted OSFP modules are no longer optimal for AI-driven data center requirements. Although co-packaged optics (CPO) and on-board optics (OBO) have been proposed to improve bandwidth density, they still face significant hurdles in field maintainability, scalability, and manufacturing yield, making widespread deployment in hyperscale environments difficult. XPO is positioned to fill this gap.
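The reliability point above (failures are statistically inevitable across more than 50,000 links) can be illustrated with a short calculation. This is a minimal sketch, not a figure from the white paper: it assumes independent links with a constant failure rate, and the 1% annualized failure rate and 30-day job length are placeholder values chosen only for illustration.

```python
import math

HOURS_PER_YEAR = 8760.0

def expected_failures(n_links: int, annual_failure_rate: float, hours: float) -> float:
    """Expected number of link failures over `hours`, assuming a constant rate."""
    return n_links * annual_failure_rate * hours / HOURS_PER_YEAR

def p_any_failure(n_links: int, annual_failure_rate: float, hours: float) -> float:
    """Probability that at least one link fails, assuming independent
    links with exponentially distributed time to failure."""
    return 1.0 - math.exp(-expected_failures(n_links, annual_failure_rate, hours))

# ASSUMPTIONS (hypothetical): 1% annualized failure rate, 30-day training job.
N_LINKS = 50_000          # link count quoted in the text
AFR = 0.01                # placeholder, not from the white paper
JOB_HOURS = 24 * 30       # placeholder job duration

exp_fail = expected_failures(N_LINKS, AFR, JOB_HOURS)
p_fail = p_any_failure(N_LINKS, AFR, JOB_HOURS)
print(f"expected failures during the job: {exp_fail:.1f}")
print(f"probability of at least one failure: {p_fail:.3f}")
```

Under these placeholder inputs, dozens of failures would be expected during a single job, which is why reducing the per-link failure rate matters at this scale.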

III. Module Specifications and Core Architecture

3.1 Basic Technical Parameters

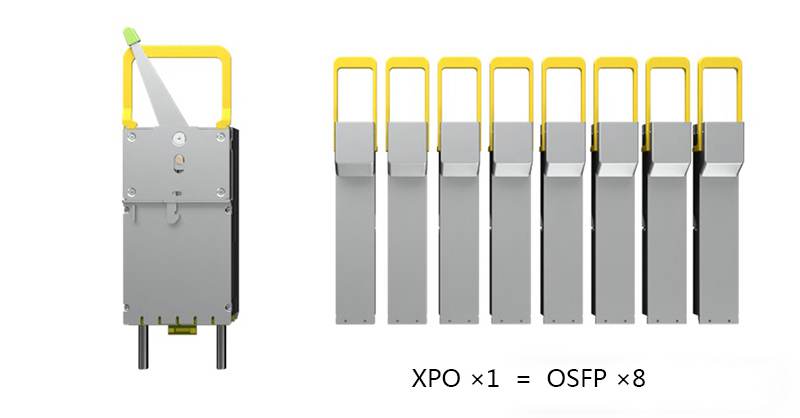

A single XPO module delivers 12.8 Tbps of bandwidth via 64 electrical lanes at 200 Gbps each. The module dimensions are 60.8 mm (W) × 111.8 mm (L) × 21.3 mm (H). Its width is approximately 2.7 times that of an OSFP module, but its per-module bandwidth is 8 times that of OSFP. In one Open Rack Unit (1OU), an XPO-based system achieves 204.8 Tbps of switching capacity, delivering a 4× improvement in front-panel density over the existing OSFP standard. A single XPO module can replace the workload of eight OSFP modules.
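The figures in this paragraph can be cross-checked with simple arithmetic. The sketch below uses only numbers quoted in this article, plus one assumption: the OSFP baseline is an 8-lane module at 200 Gbps per lane (1.6 Tbps), which is what the stated 8× ratio implies. It also follows the article in comparing 1U and 1OU directly, although the two rack units differ slightly in height.

```python
LANE_GBPS = 200            # per-lane rate quoted in the text
XPO_LANES = 64             # electrical lanes per XPO module
OSFP_LANES = 8             # ASSUMPTION: 8 x 200G OSFP baseline (1.6 Tbps)
OSFP_PER_1U = 32           # OSFP modules per 1U, from section II
XPO_SYSTEM_GBPS = 204_800  # 204.8 Tbps per 1OU, from the text

# Integer Gbps arithmetic avoids floating-point rounding noise.
xpo_module_gbps = XPO_LANES * LANE_GBPS           # 12,800 Gbps = 12.8 Tbps
osfp_module_gbps = OSFP_LANES * LANE_GBPS         # 1,600 Gbps = 1.6 Tbps
osfp_system_gbps = OSFP_PER_1U * osfp_module_gbps # 51,200 Gbps = 51.2 Tbps

bandwidth_ratio = xpo_module_gbps // osfp_module_gbps      # per-module gain
xpo_modules_per_1ou = XPO_SYSTEM_GBPS // xpo_module_gbps   # implied module count
density_gain = XPO_SYSTEM_GBPS / osfp_system_gbps          # front-panel gain

print(f"XPO module: {xpo_module_gbps / 1000} Tbps")
print(f"Per-module bandwidth ratio vs OSFP: {bandwidth_ratio}x")
print(f"Implied XPO modules per 1OU: {xpo_modules_per_1ou}")
print(f"Front-panel density gain: {density_gain}x")
```

The implied count of 16 XPO modules per 1OU is derived from the quoted capacities rather than stated directly in the text.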

3.2 “Belly-to-Belly” Dual-PCB Design

XPO abandons the single-PCB layout of traditional optical modules and adopts a "Belly-to-Belly" dual-card design. The module houses two independent 32-channel PCBs, Card 1 and Card 2, arranged face-to-face.

The key advantage of this architecture is centralized thermal zone management. High-power, high-heat components (such as transmit circuits and laser drivers) are concentrated on the inward-facing "hot" side of each card, while low-power components (such as receive circuits and control logic) are placed on the outward-facing "cold" side. This layout shortens the heat dissipation path and lets the hottest devices make efficient contact with the cold plate, supporting higher power density without increasing module size.

3.3 Flexible Interface Options

The XPO module architecture offers high deployment flexibility. It supports fully retimed, half-retimed, and linear optics interface schemes, covering scenarios from short-reach to long-reach and from low-cost to high-performance. The form factor can host DR, FR, LR, and SR optics as well as high-power coherent optics such as 8-channel 1600G-ZR.

XPO also leverages the existing 200G/lane optical and electrical chip ecosystem, so no new chip development is required. The pin definition is compatible with current 8-channel chip designs, enabling rapid volume production. This reduces industry transition costs and paves the way for fast, large-scale deployment.

IV. Liquid Cooling and Thermal Management Technology

Liquid cooling integration is one of the most distinctive technical features of XPO. The XPO module integrates an internal liquid‑cooled cold plate, supporting over 400 W of power dissipation per module. The two 32‑channel paddle cards share a common cold plate, capable of cooling both low‑power and high‑power optical modules (e.g., 8×1600G‑ZR/ZR+).
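To put the 400 W figure in context, it can be expressed as a thermal envelope per bit. This is a rough sketch that treats 400 W as an upper bound on dissipation for a 12.8 Tbps module; it says nothing about the actual power draw of any specific optic. The count of 16 modules per 1OU is derived from the capacities quoted earlier (204.8 Tbps / 12.8 Tbps), not stated in the text.

```python
def pj_per_bit(power_w: float, bandwidth_tbps: float) -> float:
    """Energy per bit in picojoules.

    W / (Tbit/s) = J / Tbit = 1e-12 J/bit, so the ratio is already in pJ/bit.
    """
    return power_w / bandwidth_tbps

COOLING_W_PER_MODULE = 400.0   # "over 400 W" per module, from the text
MODULE_TBPS = 12.8             # per-module bandwidth, from the text
MODULES_PER_1OU = 16           # DERIVED: 204.8 Tbps / 12.8 Tbps

envelope = pj_per_bit(COOLING_W_PER_MODULE, MODULE_TBPS)
chassis_w = COOLING_W_PER_MODULE * MODULES_PER_1OU

print(f"Thermal envelope per bit: {envelope:.2f} pJ/bit")
print(f"Optics cooling capacity per 1OU: {chassis_w:.0f} W")
```

Lower-power options such as linear optics would sit well below this ceiling; the headroom is what allows the same cold plate to serve high-power coherent optics.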

The white paper expects all large-scale AI data centers to adopt liquid cooling, which means the switches deployed in them must be liquid-cooled as well. Although a liquid-cooled cold plate can be added to a flat-top OSFP module, it does not significantly improve thermal performance. XPO addresses the heat dissipation challenge of high-density deployments by integrating the cold plate directly inside the module.

V. Hardware Simplification and Reliability Improvement

While increasing density, XPO dramatically improves system reliability through hardware simplification. Each 32‑channel paddle card contains only one microcontroller and one set of voltage converters, reducing the number of common components by 75% compared to four OSFP modules. Lower hardware complexity directly translates into a lower failure rate.
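The 75% figure follows from the channel counts. The sketch below assumes, as the comparison in the text implies, that each 8-channel OSFP module carries its own microcontroller and its own set of voltage converters; the variable names are illustrative only.

```python
LANES_PER_OSFP = 8           # ASSUMPTION: 8-channel OSFP baseline
LANES_PER_PADDLE_CARD = 32   # from the text
CARDS_PER_XPO = 2            # two paddle cards per XPO module

# OSFP modules needed to match one paddle card's channel count.
osfp_equiv_per_card = LANES_PER_PADDLE_CARD // LANES_PER_OSFP

# One microcontroller + one converter set per unit (per card or per OSFP).
xpo_sets_per_card = 1
osfp_sets_per_card_equiv = osfp_equiv_per_card * 1

reduction = 1 - xpo_sets_per_card / osfp_sets_per_card_equiv

# Same comparison at the level of a whole XPO module.
xpo_sets_per_module = CARDS_PER_XPO * xpo_sets_per_card
osfp_sets_per_module_equiv = CARDS_PER_XPO * osfp_sets_per_card_equiv

print(f"OSFP modules replaced per paddle card: {osfp_equiv_per_card}")
print(f"Common-component reduction: {reduction:.0%}")
print(f"Per XPO module: {xpo_sets_per_module} sets vs {osfp_sets_per_module_equiv} for OSFP")
```

The calculation counts only the shared housekeeping components named in the text; it does not model how failure rates of the optical components themselves change.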

It is estimated that in a 400 MW AI data center equipped with 1,024 GPU racks (totaling 128,000 GPUs), adopting XPO can reduce the number of switch racks by 75% and save over 44% of floor space. This lowers infrastructure costs, including power distribution, piping, and installation, and also shortens deployment time.

TEL:+86 158 1857 3751
